Root Systems

Any root system must be symmetric. For any reflection $R_x^2=1$ and thus $R_x(R)=R$, i.e. every element of the Weyl group just permutes the roots. $W_R$ must therefore be isomorphic to both a sub-group of the group of permutations of $R$ and a sub-group of $\OO(E)$. Geometrically the fourth condition says that for any pair of roots $x,y$ twice the orthogonal projection of $y$ onto the line $\R x$ is an integer multiple of $x$. Moreover $$ R_x(y)=y-\frac{2\la x,y\ra}{\Vert x\Vert^2}x=y-nx \quad\mbox{for some $n\in\Z$}~. $$

Suppose both $(R,E)$ and $(S,F)$ are root systems, then $R\cup S$ is a root system in $E\oplus F$. We simply check two details:

1. For $x\in R$ and $y\in S$ we have $R_x(y)\in R\cup S$, which follows from the fact that $x\perp y$ and thus $R_x(y)=y$.

2. For $x\in R$ and $y\in S$ we have $2\la x,y\ra/\Vert x\Vert^2\in\Z$, which is obvious, as $\la x,y\ra=0$.
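The reflection formula and the integrality condition above can be checked mechanically on a small example. The following is a minimal sketch, assuming the roots $e_j-e_k$ of $\sla(3,\C)$ realized in $\R^3$; the function names are hypothetical, and the check covers only closure under reflections and integrality, not the remaining root system axioms.

```python
from fractions import Fraction
from itertools import permutations

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def reflect(x, y):
    """R_x(y) = y - 2<x,y>/<x,x> * x, computed with exact rationals."""
    c = Fraction(2 * dot(x, y), dot(x, x))
    return tuple(b - c * a for a, b in zip(x, y))

def a2_roots():
    """Roots e_j - e_k (j != k) in R^3 (assumed model of the sl(3) roots)."""
    roots = []
    for j, k in permutations(range(3), 2):
        v = [0, 0, 0]
        v[j], v[k] = 1, -1
        roots.append(tuple(v))
    return roots

def is_closed_and_integral(roots):
    """Check R_x(R) = R and 2<x,y>/<x,x> in Z for all x, y in R."""
    root_set = set(roots)
    for x in roots:
        for y in roots:
            n = Fraction(2 * dot(x, y), dot(x, x))
            if n.denominator != 1:
                return False
            if reflect(x, y) not in root_set:
                return False
    return True
```

For instance, `is_closed_and_integral(a2_roots())` holds, while the set `[(1, 0), (0, 1)]` fails because it is not symmetric.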

A root system $(R,E)$ is said to be reducible if there exist nontrivial root systems $(R_1,E_1)$ and $(R_2,E_2)$ such that $R=R_1\cup R_2$ and $E=E_1\oplus E_2$. If $R$ is not reducible it is called irreducible.

Two root systems $(R,E)$ and $(S,F)$ are said to be isomorphic if there exists an isometry $U:E\rar F$, such that $U(R)=S$ and for all $x\in R$: $UR_x=R_{Ux}U$.

$\proof$

Put $m\colon=2\la x,y\ra/\Vert x\Vert^2$ and $n\colon=2\la y,x\ra/\Vert y\Vert^2$, then $m,n\in\Z$ and denoting by $\a$ the angle between $x$ and $y$: $mn=4\cos^2\a$ and (for $m\neq0$) $n/m=\Vert x\Vert^2/\Vert y\Vert^2\geq1$. This implies that $|m|\leq|n|$ and $mn\in\{0,1,2,3,4\}$ and thus $\cos^2\a\in\{0,1/4,1/2,3/4,1\}$. However $\cos^2\a=1$ implies that $x$ and $y$ are collinear. Therefore $\cos^2\a\in\{0,1/4,1/2,3/4\}$ and $mn\in\{0,1,2,3\}$. Suppose $mn=3$; since $|m|\leq|n|$ we must have: $(|m|,|n|)=(1,3)$, which implies that $\Vert x\Vert^2/\Vert y\Vert^2=|n|/|m|=3$. The other cases are similar. $\eofproof$

$\proof$ In any case $x$ and $y$ are not collinear and they are not orthogonal to each other. Moreover, w.l.o.g. we may assume that $\Vert x\Vert\geq\Vert y\Vert$. This implies under the first condition that $m,n\in\N$, $m\leq n$ and $mn\in\{1,2,3\}$. The only possible value for $m$ is $1$. Therefore $R_x(y)=y-mx=y-x$. The second case is similar. $\eofproof$

$\proof$ Let $x^\vee\colon=2x/\Vert x\Vert^2$ be the co-root of $x\in R$. We only check the fourth property: $$ \frac{2\la x^\vee,y^\vee\ra}{\Vert x^\vee\Vert^2} =\frac{8\la x,y\ra\Vert x\Vert^4}{4\Vert x\Vert^2\Vert y\Vert^2\Vert x\Vert^2} =\frac{2\la x,y\ra}{\Vert y\Vert^2}\in\Z $$ Moreover $$ \Big(\frac{2\la x^\vee,y^\vee\ra}{\Vert x^\vee\Vert^2},\frac{2\la x^\vee,y^\vee\ra}{\Vert y^\vee\Vert^2}\Big) =\Big(\frac{2\la x,y\ra}{\Vert y\Vert^2},\frac{2\la x,y\ra}{\Vert x\Vert^2}\Big) =(n,m)\in\Z^2~. $$ $\eofproof$
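The case analysis on $(m,n)$ in the first proof above is a finite enumeration, so it can be tabulated. The sketch below lists all integer pairs with $mn\in\{0,1,2,3\}$ and $|m|\leq|n|$ together with $\cos^2\a=mn/4$ and the ratio $\Vert x\Vert^2/\Vert y\Vert^2=n/m$; the helper names are hypothetical.

```python
from fractions import Fraction

def admissible_pairs():
    """All (m, n) with m*n in {0,1,2,3}, |m| <= |n|, and m = 0 iff n = 0."""
    pairs = []
    for m in range(-3, 4):
        for n in range(-3, 4):
            if (m == 0) != (n == 0):
                continue  # m and n are both multiples of <x,y>
            if 0 <= m * n <= 3 and abs(m) <= abs(n):
                pairs.append((m, n))
    return pairs

def describe(m, n):
    """Return (cos^2 of the angle, ||x||^2/||y||^2 or None if x is orthogonal to y)."""
    cos2 = Fraction(m * n, 4)
    ratio = Fraction(n, m) if m != 0 else None
    return cos2, ratio

for m, n in admissible_pairs():
    print((m, n), describe(m, n))
```

Apart from the orthogonal case $(0,0)$, only $|m|=1$ survives, which is the fact used in the second proof.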

Bases and Weyl Chambers

Base of a root system

A subset $B$ of a root system $R$ in $E$ is said to be a base if

- $B$ is a basis for the vector space $E$.

- For each $x\in R$ either $x\in\sum_{b\in B}\N_0 b$ or $-x\in\sum_{b\in B}\N_0 b$.

Does every root system $R$ contain a base? We will actually prove a little bit more: if $B$ is a basis for $E$, then there is some unit vector $h\in E$ such that $B\sbe[\la.,h\ra > 0]$. In the sequel we will show that given a unit vector $h\in E$ such that $R\cap[\la.,h\ra = 0]=\emptyset$ there is exactly one basis $B\sbe R^+\colon=R\cap[\la.,h\ra > 0]$ such that

$$

R^+\sbe\sum_{b\in B}\N_0 b~.

$$

This shows that $B$ is a base iff $B$ is a basis and there is some unit vector $h$ such that $R=R^+\cup -R^+$, where $R^+=R\cap[\la.,h\ra > 0]$ and $R^+\sbe\sum_{b\in B}\N_0b$. Hence an obvious candidate for a base is a set of minimal cardinality in

$$

\B\colon=\Big\{B\sbe R^+:R^+\sbe\sum_{b\in B}\N_0 b\Big\}

$$

which exists, because $R^+\in\B$ and thus $\B$ is not empty. Let us denote a minimal set by $B$. Since $R=R^+\cup -R^+$ and $R$ spans $E$ we have $\lhull{B}=E$. Moreover, minimality implies that:

$$

\forall x\in B:\quad x\notin\sum_{b\in B\sm\{x\}}\N_0 b~.

$$

We say that every vector in $B$ is indecomposable in $B$.

Next we will show an important property of a minimal set $B$:

1. For all $b_1\neq b_2\in B$ we have $\la b_1,b_2\ra\leq0$.

Assume to the contrary that for some pair $b_1\neq b_2$: $\la b_1,b_2\ra > 0$. Since $b_1\neq b_2$ we infer that the angle between $b_1$ and $b_2$ is in the range $(0,\pi/2)$. By corollary we may assume w.l.o.g. that $b_1-b_2\in R^+$, i.e. $b_1-b_2=\sum_{b\in B}n(b)b$ with $n(b)\in\N_0$, or equivalently $$ (1-n(b_1))b_1-b_2=\sum_{b\neq b_1}n(b)b~. $$ If $n(b_1) > 0$, then $1-n(b_1)\leq0$ and therefore $0 > \la(1-n(b_1))b_1-b_2,h\ra=\sum_{b\neq b_1}n(b)\la b,h\ra\geq0$, which is a contradiction. Otherwise $n(b_1)=0$ and $b_1=b_2+\sum_{b\neq b_1}n(b)b$, i.e. $b_1$ is not indecomposable.

2. If $B\sbe[\la.,h\ra > 0]$ is a collection of non zero vectors such that for all $b_1\neq b_2\in B$: $\la b_1,b_2\ra\leq0$, then $B$ is linearly independent: Suppose $0=\sum_b n(b)b$ and put $$ x\colon=\sum_{[n > 0]}n(b)b=\sum_{[n < 0]}-n(b)b~. $$ Then, as $[n > 0]\cap[n < 0]=\emptyset$ we get by assumption: $$ \Vert x\Vert^2=\sum_{n(b_1) > 0,n(b_2) < 0} -n(b_2)n(b_1)\la b_1,b_2\ra\leq0, $$ i.e. $x=0$ and thus: $\sum_{n > 0}n(b)\la b,h\ra=0$, which implies that $n(b)=0$ for all $b$.

By 1. and 2. any minimal set $B$ in $\B$ is indeed a base for $R$ and thus it remains to verify uniqueness: Suppose $D\sbe R^+$ is another base, then any $b\in B$ is in $\sum_{d\in D}\N_0d$ and any $d\in D$ is in $\sum_{b\in B}\N_0b$. Thus there are matrices $X,Y\in\Ma(\dim E,\N_0)$ such that $XY=1$. But the only matrices with this property are permutation matrices, i.e. $D$ is just a permutation of $B$.
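The construction of the base from a vector $h$ can be tried out on a small root system. The sketch below assumes the eight roots $\pm e_1,\pm e_2,\pm e_1\pm e_2$ in $\R^2$ as test data, and it uses the standard shortcut that the base consists of the positive roots which are not a sum of two positive roots, instead of searching $\B$ for a set of minimal cardinality; both choices are assumptions of this illustration. The vector $h$ is not normalized, which does not affect any sign.

```python
from itertools import product

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def b2_roots():
    """The eight roots +-e1, +-e2, +-e1+-e2 (assumed example data)."""
    return [v for v in product((-1, 0, 1), repeat=2) if v != (0, 0)]

def positive_roots(roots, h):
    """R^+ = R ∩ [<., h> > 0]; h must not be orthogonal to any root."""
    assert all(dot(r, h) != 0 for r in roots)
    return [r for r in roots if dot(r, h) > 0]

def base(roots, h):
    """Positive roots that are not a sum of two positive roots."""
    pos = positive_roots(roots, h)
    sums = {tuple(a + b for a, b in zip(u, v)) for u in pos for v in pos}
    return sorted(r for r in pos if r not in sums)

print(base(b2_roots(), (2, 1)))
```

With $h=(2,1)$ the positive roots are $e_1$, $e_2$, $e_1+e_2$, $e_1-e_2$, and the two indecomposable ones are $e_2$ and $e_1-e_2$.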

Of course, a base $B$ is indecomposable; the following example is about a somewhat stronger property: Assume $b=\sum_{x\in R_1^+}\a(x)x$ for some subset $R_1^+\sbe R^+$ with $\a(x) > 0$. Now for all such $x$ we have: $$ x =\sum_{c\in B} n_x(c)c \quad\mbox{where $n_x(c)\in\N_0$} $$ and thus $$ b =\sum_{x\in R_1^+}\sum_{c\in B}\a(x)n_x(c)c =\sum_{c\in B}\Big(\sum_{x\in R_1^+}\a(x)n_x(c)\Big)c~. $$ Since $B$ is a basis, we must have $\sum_{x}\a(x)n_x(b)=1$ and for all $c\neq b$: $\sum_{x}\a(x)n_x(c)=0$. Hence for all $c\neq b$ and all $x\in R_1^+$: $\a(x)n_x(c)=0$, i.e. $n_x(c)=0$. The only possible non zero coefficient in the expansion of any $x\in R_1^+$ is $n_x(b)$. This means that $x=n_x(b)b$, but the only multiple of $b$ which is in $R^+$ is $b$.

Choose a unit vector $h\in E$ such that $\la r,h\ra > 0$ and for all $x\in R^+\colon=R\cap[\la.,h\ra > 0]$ different from $r$: $\la x,h\ra > \la r,h\ra$. Then $$ r\notin\sum_{x\in R^+\sm\{r\}}\N_0x, $$ for $r=\sum n(x)x$ implies $\la r,h\ra=\sum n(x)\la x,h\ra > (\sum n(x))\la r,h\ra$, i.e. $\sum n(x) < 1$, which forces all $n(x)=0$ and thus $r=0$.

Suppose $r\in R^+\sm\{b\}$, then for some non empty subset $B^\prime$ of $B\sm\{b\}$: $$ r=\sum_{x\in B^\prime}c(x)x+c(b)b \quad\mbox{and}\quad c(x)\in\N, c(b)\in\N_0~. $$ Now for each $r\in R$: $R_b(r)=r-nb$ for some $n\in\Z$ and therefore $$ R_b(r)=\sum_{x\in B^\prime}c(x)x+(c(b)-n)b\in R $$ As $R=R^+\cup R^-$, $B^\prime\neq\emptyset$ and $c(x) > 0$, $R_b(r)$ cannot belong to $R^-$. Hence we must have $R_b(r)\in R^+$, i.e. we must also have $c(b)\geq n$.

If $B$ is a base for $R$, then it is not at all obvious that $B^\vee$ is a base for $R^\vee$, because if $r=\sum n(b)b\in R^+$, then $$ r^\vee =\frac{2\sum_{b\in B}n(b)b}{\Vert r\Vert^2} =\sum_{b\in B}\frac{n(b)\norm b^2}{\Vert r\Vert^2}b^\vee $$ However the statement is true and thus in particular $$ \forall b\in B\,\forall r=\sum n(b)b\in R^+:\quad \frac{n(b)\norm b^2}{\Vert r\Vert^2}\in\N_0~. $$

$\proof$ By proposition we know that $R^\vee$ is a root system in $E$.
As $B$ is a basis for $E$, $B^\vee$ is a basis for $E$ and if $B\sbe[\la .,h\ra > 0]$, then $B^\vee\sbe[\la .,h\ra > 0]$. Moreover, the set of positive roots of $R^\vee$ coincides with the set $R^{+\vee}\colon=\{x^\vee:x\in R^+\}$ and therefore there is a unique base $C\sbe R^{+\vee}$ for the root system $R^\vee$ such that $$ R^{+\vee}\sbe\sum_{c\in C}\N_0c~. $$ Assume that there is some $x\in R^+\sm B$ such that $x^\vee\in C$. Now $x$ can be written as $x=\sum_{b\in B}n(b)b$ - at least two of the coefficients $n(b)\in\N_0$ are different from $0$. Thus $$ x^\vee =\sum_{b\in B}\frac{2n(b)}{\Vert x\Vert^2}b =\sum_{b\in B}\frac{n(b)\Vert b\Vert^2}{\Vert x\Vert^2} b^\vee \in\sum_{b^\vee\in B^\vee}\R_0^+b^\vee~. $$ But by exam any $x^\vee$ in the base $C$ cannot lie in the positive convex cone generated by $R^{+\vee}\sm\{x^\vee\}$, which is a superset of $B^\vee$. Hence our assumption that there is some $x\in R^+\sm B$ such that $x^\vee\in C$ is false, i.e. $C\sbe B^\vee$ and as both are bases: $C=B^\vee$. $\eofproof$
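The statement that $B^\vee$ is a base for $R^\vee$ can be observed on a small case. This is a sketch under the same assumptions as before (roots $\pm e_1,\pm e_2,\pm e_1\pm e_2$, unnormalized $h=(2,1)$, and bases computed as the positive roots that are not a sum of two positive roots); it verifies one example and is no substitute for the proof.

```python
from fractions import Fraction
from itertools import product

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def coroot(x):
    """x^vee = 2x / ||x||^2."""
    return tuple(Fraction(2 * a, dot(x, x)) for a in x)

def indecomposables(pos):
    """Elements of pos that are not a sum of two elements of pos."""
    sums = {tuple(a + b for a, b in zip(u, v)) for u in pos for v in pos}
    return {r for r in pos if r not in sums}

h = (2, 1)  # hypothetical choice; any h off all root hyperplanes works
roots = [v for v in product((-1, 0, 1), repeat=2) if v != (0, 0)]
pos = [r for r in roots if dot(r, h) > 0]
pos_dual = [coroot(r) for r in pos]  # positive co-roots for the same h

base = indecomposables(pos)
base_dual = indecomposables(pos_dual)
print(base_dual == {coroot(b) for b in base})
```

Here the long and short roots exchange roles under $x\mapsto x^\vee$, yet the base of the dual system is exactly the set of co-roots of the base.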

$H_1$ and $H_2$ form a base for the root system $\{\pm H_1,\pm H_2,\pm H_3\}$ of $\sla(3,\C)$, cf. e.g. section.

Find a base $B$ of the root system of $\sla(n,\C)$ given in example and describe $R^+$. Show that for any vector $x=\sum x_je_j$ such that $x_1 > x_2 > \cdots > x_n$ we have $R^+=\{r\in R:\la r,x\ra > 0\}$.

$E\colon=\{x\in\R^n:\la x,N\ra=0\}$, where $N=e_1+\cdots+e_n$. Put for $k=1,\ldots,n-1$: $b_k\colon=e_k-e_{k+1}$. Then for $j < k$: $e_j-e_k=b_j+\ldots+b_{k-1}$, i.e. $b_1,\ldots,b_{n-1}$ is a base and $R^+=\{e_j-e_k:j < k\}$. Finally for $j < k$: $\la e_j-e_k,x\ra=x_j-x_k > 0$.

Referring to example we conclude from the above example: $H_1=E^{11}-E^{22},\ldots,H_{n-1}=E^{n-1,n-1}-E^{nn}$.

$E=\R^n$. Put for $k=1,\ldots,n-1$: $b_k\colon=-e_k+e_{k+1}$ and $b_n=e_1+e_2$. Then $-e_j+e_k=b_j+\ldots+b_{k-1}$, $e_1+e_k=b_n+(-e_1+e_k)$ and for $j=2,\ldots,k-1$: $e_j+e_k=(e_{j-1}+e_k)+b_{j-1}$.

The dual root system of $\so(2n+1,\C)$ is isometric to the root system of $\spa(n,\C)$. Cf. example and example.

Weyl chambers

Given a root system $R$ in $E$ an open Weyl chamber is a connected component of the set

$$

E\sm\bigcup_{r\in R}[\la.,r\ra=0]~.

$$

If $B$ is a base for $R$, then the sets

$$

C\colon=\bigcap_{b\in B}[\la.,b\ra\geq0]

\quad\mbox{and}\quad

C^\circ=\bigcap_{b\in B}[\la.,b\ra > 0]

$$

are said to be the closed and open fundamental Weyl chambers in $E$ relative to $B$. Cf. H. Weyl.
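For the root system $\{e_j-e_k\}$ of $\sla(n,\C)$ the open Weyl chambers can be counted directly, since a point off all hyperplanes $[\la.,e_j-e_k\ra=0]$ is characterized by the signs of $x_j-x_k$. The sketch below assumes that it suffices to test one representative per ordering of the coordinates (the signs do not change when a multiple of $N$ is added); the function names are hypothetical.

```python
from itertools import combinations, permutations

def sign_pattern(x):
    """Signs of <x, e_j - e_k> = x_j - x_k for all j < k."""
    return tuple(1 if x[j] > x[k] else -1
                 for j, k in combinations(range(len(x)), 2))

def count_chambers(n):
    """Count the sign patterns realized by points with distinct coordinates."""
    points = permutations(range(n))  # one representative per ordering
    return len({sign_pattern(x) for x in points})

print(count_chambers(3), count_chambers(4))
```

The pattern consisting only of $+1$ belongs to the open fundamental chamber $x_1 > \cdots > x_n$ relative to the base $b_k=e_k-e_{k+1}$.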

Since any base $B$ is a basis, a fundamental Weyl chamber $C$ is a convex cone with vertex $0$ and non empty interior $C^\circ$, thus $C^\circ$ is an open convex cone and hence connected. We claim that for all roots $r$ the function $x\mapsto\la x,r\ra$ does not change sign on $C^\circ$: indeed, $r=\pm\sum_{b\in B_1}n(b)b$, $\emptyset\neq B_1\sbe B$, $n(b) > 0$ and for all $x\in C^\circ$: $\la x,b\ra > 0$. Hence for all $r\in R$: $[\la.,r\ra=0]\cap C^\circ=\emptyset$ and this shows that $C^\circ$ is an open Weyl chamber bounded by the hyperplanes $[\la.,b\ra=0]$, $b\in B$. So open fundamental Weyl chambers are open Weyl chambers.

Also, since $B$ is a base: $R^+\sbe\sum_{b\in B}\N_0b$ and thus for all $x\in C^\circ$ and all $r\in R^+$: $\la x,r\ra > 0$, i.e. \begin{equation}\label{bwceq1}\tag{BWC1} R^+\sbe R\cap\bigcap_{x\in C^\circ}[\la x,.\ra > 0]~. \end{equation} Next we will show that this inclusion is in fact an equality. We need the following result, which is just a special case of the bipolar theorem in locally convex spaces. However, we do not need to invoke the Hahn-Banach theorem, because in a real Hilbert space $E$ for any closed convex set $C$ and any point $x_0\neq0$, $x_0\notin C$, there is a vector $y\in E$ such that $\la x_0,y\ra < -1$ and for all $x\in C$: $\la x,y\ra\geq-1$: for $y$ you may choose a multiple of $x_1-x_0$, where $x_1\in C$ is the best approximation of $x_0$ within $C$!

$\proof$ Obviously, $A^p,C^p$ are closed and convex and $A^p\spe C^p$.

1. Clearly $A^{pp}$ is a closed convex subset containing $A$ and $0$, i.e. $C\sbe A^{pp}$. Assume that there is some point $x_0\in A^{pp}$ such that $x_0\notin C\colon=\cl{\convex{A\cup\{0\}}}$. Then there is a vector $y_0\in E$ such that: $$ \la x_0,y_0\ra < -1,\quad\mbox{and}\quad \forall x\in C:\quad\la x,y_0\ra\geq-1~. $$ Hence $y_0\in C^p=A^p$ and thus $x_0\notin A^{pp}$.

2. Again, if $y_0\in A^p\sm C^p$, then there is $x_0\in E$ such that $\la y_0,x_0\ra < -1$ and for all $y\in C^p$: $\la y,x_0\ra\geq-1$, i.e. $x_0\in C^{pp}$ and $x_0\notin A^{pp}$, which is impossible by 1.

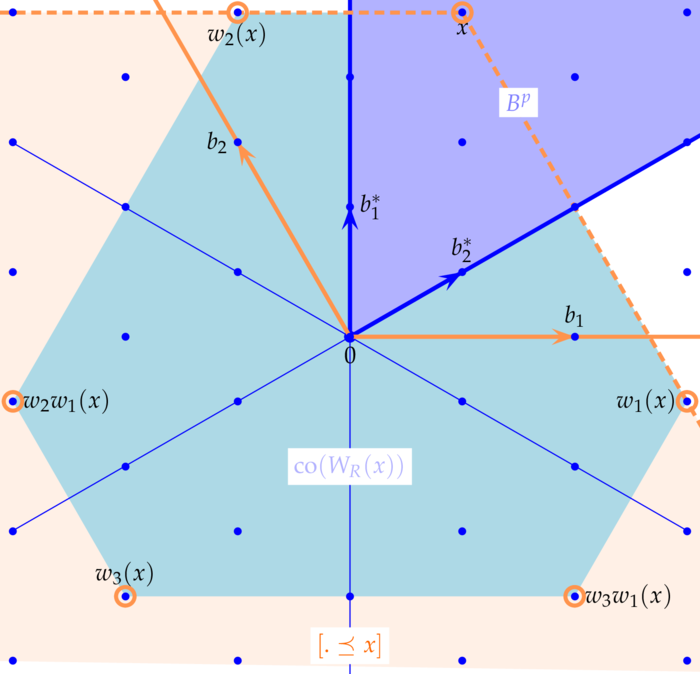

3. If $A$ is a cone with vertex $0$, then for all $x\in A$ and all $\l > 0$: $\l x\in A$. Hence for all $y\in A^p$: $\la x,y\ra\geq -1/\l$, i.e. $\la x,y\ra\geq0$. $\eofproof$

By definition we have for the fundamental Weyl chamber $C$ relative to the base $B$: $$ C=B^p=R^{+p} \quad\mbox{and}\quad \sum_{b\in B}\R_0^+b =\cl{\convex{B\cup\{0\}}} =B^{pp}, \quad\mbox{i.e.}\quad \sum_{b\in B}\R^+b=B^{pp}\sm\{0\}~. $$

$\proof$ Let $D$ be the closed convex cone generated by $R^+$, then $R^+=R\cap D$ and by lemma: $C^p=D$. Hence $C^p\cap R=R^+$. Since $C$ is closed and convex with non empty interior, we have: $\cl{C^{\circ}}=C$ and therefore by lemma: $C^{\circ p}=C^p$. It follows that $$ \bigcap_{x\in C^\circ}[\la x,.\ra > 0] \sbe\bigcap_{x\in C^\circ}[\la x,.\ra\geq0] =C^{\circ p} =C^p~. $$ On the other hand we always have by \eqref{bwceq1}: $R^+\sbe R\cap\bigcap_{x\in C^\circ}[\la x,.\ra > 0]$. $\eofproof$

Not all subsets of a root system $R$ which form a basis of the vector space $E$ constitute a base for $R$. However, the subsequent result shows that every open Weyl chamber $W$ of $R$ is an open fundamental Weyl chamber:

$\proof$ Choose $x_0\in C$, then the hyperplane $[\la.,x_0\ra=0]$ does not contain any root. Hence there is a base $B$ of $R$ such that $B\sbe[\la.,x_0\ra > 0]$. On the other hand for all roots $r$ the functions $x\mapsto\la r,x\ra$ do not change sign on $C$ and as these signs are $+1$ at $x_0$ for all $r=b\in B$ these signs are $+1$ for all $b\in B$ and all $x\in C$. Hence $C\sbe B^p$ and as $C$ is a connected component: $C=B^{p\circ}$.

We are left to prove uniqueness: Suppose $D$ is another base, then $D^p=B^p$ and therefore both bases have the same system of positive roots. But this system of positive roots contains just one base. $\eofproof$

As any $w\in W_R$ maps a base onto a base, $w(B)$ is a base and $x\in w(B)^p$ iff for all $b\in B$: $0\leq\la x,w(b)\ra=\la w^{-1}(x),b\ra$, i.e. iff $w^{-1}(x)\in B^p$.

Weyl Chambers and the Weyl group

Put $\dim E=n+1$ and let us denote by $S^n$ the unit sphere $\{x\in E:\Vert x\Vert=1\}$. We look at the set $\Sigma_n$ of all intersections $C\cap S^n$ of Weyl chambers $C$ of a root system $R$ in $E$. Each of these sets is bounded by $n+1$ hyperplanes and is thus an $n$-simplex on the sphere $S^n$. The set $\Sigma$, consisting of all sub-simplices of simplices in $\Sigma_n$, is a so-called triangulation of $S^n$, i.e. the intersection of two simplices in $\Sigma$ is again a simplex in $\Sigma$. Moreover the pair $(S^n,\Sigma)$ is a pseudo-manifold, which means that:

- Every simplex is a sub-simplex of an $n$-simplex.

- Every $(n-1)$-simplex is a sub-simplex of exactly two $n$-simplices.

- For each pair $S,T$ of $n$-simplices there is a sequence $S,S_1,\ldots,S_k,T$ of $n$-simplices, such that each pair of consecutive $n$-simplices has a common $(n-1)$-simplex.

The common $(n-1)$-simplex of two $n$-simplices is part of a common bounding hyperplane $[\la .,b\ra=0]$, where $b$ is an element of a base $B$. Reflecting one of the simplices about this hyperplane maps it onto the other. Hence for each pair of simplices - or equivalently Weyl chambers $C_1,C_2$ - there is an element $w$ of the Weyl group such that $w(C_1)=C_2$. In other words the Weyl group $W_R$ acts transitively on the set of open (closed) Weyl chambers - equivalently: $W_R$ acts transitively on the set of $n$-simplices $\Sigma_n$. The subsequent result provides an apparently stronger statement.

$\proof$ Let $S=B^p\cap S^n,T$ be any pair in $\Sigma_n$, $x\in S^\circ$ and $y\in T^\circ$. Choose $w\in W_B$, which minimizes $$ f(w)\colon=\norm{w(y)-x}^2 $$ We simply have to verify that $w(y)\in B^p$, because this means: $w(T^\circ)\cap S\neq\emptyset$ and thus $w(T)=S$. Assume to the contrary that $w(y)\notin B^p$, then there is some $b\in B$ such that $\la w(y),b\ra < 0$. Now compute \begin{eqnarray*} f(R_bw)-f(w) &=&1+1-1-1 -2\la R_bw(y),x\ra+2\la w(y),x\ra\\ &=&-2\Big\langle w(y)-\frac{2\la w(y),b\ra}{\norm b^2} b,x\Big\rangle+2\la w(y),x\ra =4\frac{\la w(y),b\ra\la b,x\ra}{\norm b^2} < 0 \end{eqnarray*} i.e. $w$ is not a minimizer. $\eofproof$

Of course $W_B$ is a sub-group of $W_R$ and thus the statement of the previous lemma is stronger than what we explained before. However, we can now prove:

$\proof$ By example each $r\in R$ is an element of some base $D$. As the group $W_B$ acts transitively on the Weyl chambers (by lemma), it acts transitively on the set of bases, i.e. there is some $w\in W_B$ such that $D=w(B)$ and thus $r=w(b)$. Now - assume w.l.o.g $\norm b=1$: \begin{eqnarray*} R_{w(b)}(x) &=&x-2\la x,w(b)\ra w(b) =ww^{-1}(x)-2\la w^{-1}(x),b\ra w(b)\\ &=&w\Big(w^{-1}(x)-2\la w^{-1}(x),b\ra b\Big) =wR_bw^{-1}(x), \end{eqnarray*} which shows that $R_r\in W_B$. $\eofproof$

By lemma the Weyl group acts transitively on the set of Weyl chambers. The following result implies that it also acts freely! Hence, if $w(C)=C$, then $w=1$, i.e. the Weyl group acts freely on the set of Weyl chambers. Consequently for any two Weyl chambers $C_1$ and $C_2$ there is exactly one $w\in W_R$ such that $w(C_2)=C_1$. Also, by proposition, for any pair $B_1,B_2$ of bases there is a unique $w\in W_R$ such that $w(B_2)=B_1$.

$\proof$ The closed Weyl chambers form a covering of $E$ and thus $x$ belongs to some closed Weyl chamber $D$. As $W_R$ acts transitively on the set of closed Weyl chambers - cf. lemma - there is some $w\in W_R$ (by lemma $w$ is also unique) such that $w(D)=C$, i.e. $w(x)\in C$ and therefore $W_R(x)\cap C\neq\emptyset$. However, as $D$ is not unique, $w$ may not be unique either. So assume $y=u(x)$ is another point in $C$, then both $y\colon=u(x)$ and $z\colon=w(x)$ belong to $C$ and $z=wu^{-1}(y)$; by lemma this implies $z=y$. $\eofproof$

We notice that lemma suffices to show that $W_R(x)\cap C\neq\emptyset$. lemma has only been utilized to verify that $W_R(x)\cap C$ contains exactly one point.
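The minimization argument in the proof above suggests a simple procedure for moving a point into the closed fundamental chamber: as long as some $b\in B$ has $\la y,b\ra < 0$, replace $y$ by $R_b(y)$. The sketch below assumes the base $b_k=e_k-e_{k+1}$ of $\sla(n,\C)$, where $R_{b_k}$ swaps two adjacent coordinates, so the procedure amounts to sorting; the function names are hypothetical. It terminates because each step strictly decreases $\norm{y-x}$ for a fixed strictly dominant $x$ and the orbit is finite.

```python
from fractions import Fraction

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def reflect(b, y):
    """R_b(y) = y - 2<y,b>/<b,b> * b."""
    c = Fraction(2 * dot(y, b), dot(b, b))
    return tuple(yi - c * bi for yi, bi in zip(y, b))

def simple_roots(n):
    """b_k = e_k - e_{k+1}, the base of sl(n) used in the text (0-based here)."""
    return [tuple(1 if i == k else -1 if i == k + 1 else 0 for i in range(n))
            for k in range(n - 1)]

def to_dominant(y, base):
    """Reflect in elements of the base until <y, b> >= 0 for all b."""
    y = tuple(Fraction(c) for c in y)
    while True:
        bad = [b for b in base if dot(y, b) < 0]
        if not bad:
            return y
        y = reflect(bad[0], y)

print(to_dominant((1, 3, 2), simple_roots(3)))
```

Every point of the orbit of $(1,0,-1)$ under coordinate permutations is sent to $(1,0,-1)$ itself, in line with the uniqueness statement.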

Integral and Dominant Elements

Given a root system $R$ in $E$ a vector $x\in E$ is said to be an integral element if for all roots $r\in R$:

$$

\la x,r^\vee\ra\colon=\frac{2\la x,r\ra}{\Vert r\Vert^2}\in\Z~.

$$

If $B$ is a base for $R$ a vector $x\in E$ is said to be a dominant element or a strictly dominant element if $x\in B^p$ or $x\in B^{p\circ}$, i.e.

$$

\forall b\in B:\quad\la x,b^\vee\ra\geq0

\quad\mbox{or}\quad

\la x,b^\vee\ra > 0

$$

Every root and thus every element in $\sum_{r\in R}\Z r$ is integral, but not every integral element is a root! For a vector $x\in E$ to be integral it suffices to check that $\la x,r^\vee\ra\in\Z$ for all $r$ in a base $B$. This is because by proposition the set $B^\vee$ is a base for the dual root system $R^\vee$ and thus for any $r\in R$ the vector $r^\vee$ is a linear combination of elements in $B^\vee$ with integer coefficients.

Obviously $x\in E$ is dominant or strictly dominant iff $x\in B^p$ or $x\in B^{p\circ}$. Moreover, for any $x\in E$ by corollary we have $|W_R(x)\cap B^p|=1$, i.e. there is a unique element $y=w(x)\in B^p$; in other words, the $W_R$-orbit of $x$ contains a unique dominant element.

Given a base $B$ of a root system $R$ the element \begin{equation}\label{ideeq1}\tag{IDE1} r_0\colon=\tfrac12\sum_{r\in R^+}r \end{equation} is integral and strictly dominant: we have to check only one property: for all $b\in B$: $\la r_0,b^\vee\ra\in\N$. Indeed we will show that \begin{equation}\label{ideeq2}\tag{IDE2} \forall b\in B:\qquad \la r_0,b^\vee\ra=1. \end{equation} We split $R^+\sm\{b\}$ as follows: the first set $R_1^+$ comprises all vectors orthogonal to $b$ and the second set $R_2^+$ the vectors not orthogonal to $b$. Then we get $$ \la r_0,b^\vee\ra =\tfrac12\Big(\la b,b^\vee\ra+\sum_{r\in R_2^+}\la r,b^\vee\ra\Big)~. $$ Now by example the reflection $R_b$ permutes $R_2^+$ and for all $r\in R_2^+$: $$ \la R_b(r),b^\vee\ra =\la r,R_b(b^\vee)\ra =-\la r,b^\vee\ra $$ i.e. $R_2^+$ can be represented as the pairwise disjoint union of pairs $\{r,R_b(r)\}$. It follows that the sum over $R_2^+$ vanishes and thus $\la r_0,b^\vee\ra=\tfrac12\la b,b^\vee\ra=1$.

For the root system of $\sla(n,\C)$ we use $R^+=\{e_j-e_k: j < k\}$: $$ 2r_0=\sum_{j < k}(e_j-e_k)=(n-1)e_1+(n-3)e_2+\cdots+(1-n)e_n~. $$
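Both \eqref{ideeq2} and the explicit formula for $2r_0$ can be confirmed numerically for $\sla(n,\C)$. The following is a small sketch with exact rational arithmetic, assuming the positive roots $e_j-e_k$, $j < k$, and the base $b_k=e_k-e_{k+1}$; the function names are hypothetical.

```python
from fractions import Fraction

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def unit(n, j):
    """Standard basis vector e_{j+1} of R^n (0-based index j)."""
    return tuple(1 if i == j else 0 for i in range(n))

def positive_roots(n):
    """R^+ = {e_j - e_k : j < k} for sl(n)."""
    return [tuple(a - b for a, b in zip(unit(n, j), unit(n, k)))
            for j in range(n) for k in range(j + 1, n)]

def half_sum(n):
    """r_0 = (1/2) * sum of the positive roots."""
    total = [sum(col) for col in zip(*positive_roots(n))]
    return tuple(Fraction(t, 2) for t in total)

def pairing(x, b):
    """<x, b^vee> = 2<x,b>/<b,b>."""
    return Fraction(2) * dot(x, b) / dot(b, b)

n = 4
simple = [tuple(a - b for a, b in zip(unit(n, k), unit(n, k + 1)))
          for k in range(n - 1)]
print([pairing(half_sum(n), b) for b in simple])
```

For $n=4$ the vector $2r_0$ is $(3,1,-1,-3)$ and every pairing with $b_k^\vee$ equals $1$.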

Let $B$ be a base for $R$. The dual basis to $B^\vee$ is said to be the fundamental weight system relative to $B$.

The fundamental weight system is a generating set of the convex cone $B^p$: obviously for any $b\in B$: $b^{\vee*}\in B^p$. Conversely for any $x\in B^p$ we have

$$

x=\sum\la x,b^\vee\ra b^{\vee*}

\quad\mbox{and}\quad

\la x,b^\vee\ra\geq0~.

$$

Hence, normalizing a fundamental weight system we get the vertices of the $n$-simplices of the triangulation $\Sigma_n$ of $S^n$ associated to the base $B$.

In theorem we will show that all weights of finite dimensional irreducible representations of a Lie-algebra are integral.

1. $H$ is a base for the root system of $\sla(2,\C)$ satisfying $H^\vee=H$ and the dual basis is $\l_1=H/2$. Hence $\l$ is integral iff $\l\in\frac12\Z H$.

2. $H_1,H_2$ is a base for the root system of $\sla(3,\C)$ satisfying $H_j^\vee=H_j$ and the dual basis is $\l_1=Q,\l_2=Y$, i.e. charge and hypercharge. Cf. example. Hence $\l$ is integral iff $\l\in\Z Q+\Z Y$.

By example we know that $b_k^\vee=b_k\colon=e_k-e_{k+1}$, $k=1,\ldots,n-1$, is a base in $E\colon=\{x\in\R^n:\la x,N\ra=0\}$, where $N=e_1+\cdots+e_n$. As $b_k^\vee=b_k$ we simply need to check that $b_1^*,\ldots,b_{n-1}^*$, where $b_j^*\colon=(1-\tfrac jn)\sum_{l=1}^je_l-\tfrac jn\sum_{l=j+1}^ne_l$, is indeed the dual basis of $b_1,\ldots,b_{n-1}$ in $E$.

$$

\la b_j^*,b_j\ra=1-\tfrac jn+\tfrac jn=1

$$

For $j < k$: $\la b_j^*,b_k\ra=-\tfrac jn+\tfrac jn=0$ and for $j > k$: $\la b_j^*,b_k\ra=(1-\tfrac jn)-(1-\tfrac jn)=0$.

Referring to example we conclude from the above example that a fundamental weight system in the Cartan algebra ${\cal H}$ of traceless diagonal matrices of $\sla(n,\C)$ - the dual system to $H_1,\ldots,H_{n-1}$ - is given by

$$

\forall j=1,\ldots,n-1:\quad

\l_j\colon=(1-\tfrac jn)\sum_{l=1}^jE^{ll}-\tfrac jn\sum_{l=j+1}^nE^{ll}~.

$$

In particular for $n=2$: $\l_1=H/2$ and for $n=3$: $\l_1=(2/3)E^{11}-(1/3)(E^{22}+E^{33})=Q$ and $\l_2=(1/3)(E^{11}+E^{22})-(2/3)E^{33}=Y$.
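The duality between the fundamental weights $\l_j$ and the base can be checked by computing all pairings. The sketch below represents a diagonal matrix by the tuple of its diagonal entries and assumes the pairing is the Euclidean inner product of these tuples; the function names are hypothetical.

```python
from fractions import Fraction

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def simple_root(n, k):
    """b_k = e_k - e_{k+1} for k = 1..n-1 (1-based as in the text)."""
    return tuple(1 if i == k else -1 if i == k + 1 else 0
                 for i in range(1, n + 1))

def fundamental_weight(n, j):
    """Diagonal of lambda_j: 1 - j/n in the first j slots, -j/n in the rest."""
    return tuple(Fraction(n - j, n) if l <= j else Fraction(-j, n)
                 for l in range(1, n + 1))

def gram(n):
    """Matrix of pairings <lambda_j, b_k>; expected to be the identity."""
    return [[dot(fundamental_weight(n, j), simple_root(n, k))
             for k in range(1, n)] for j in range(1, n)]

print(gram(3))
```

Each $\l_j$ is traceless, so it lies in the Cartan algebra, and for $n=3$ the two tuples are the diagonals of $Q$ and $Y$ given above.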

Partial Ordering Integral Elements

Suppose $B$ is a base for $R$ in $E$. We introduce a partial order on $E$, which obviously depends on the base $B$, i.e. it depends on the choice of a positive root system $R^+$:

$$

x\preceq y:\Lrar y-x\in\sum_{b\in B}\R_0^+b=\sum_{r\in R^+}\R_0^+r~.

$$

$y\in E$ is said to be higher than $x\in E$ or $x$ is lower than $y$.
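For $\sla(n,\C)$ with the base $b_k=e_k-e_{k+1}$ the order $\preceq$ becomes a condition on partial sums: writing $y-x=\sum_kc_kb_k$ gives $c_k=\sum_{i\leq k}(y_i-x_i)$, so $x\preceq y$ iff all these partial sums are non negative and the total sum vanishes. The sketch below implements this test for vectors with integer or rational entries; the function name is hypothetical.

```python
from itertools import accumulate, permutations

def precedes(x, y):
    """x is lower than y: y - x is a non negative combination of the b_k."""
    diff = [b - a for a, b in zip(x, y)]
    coeffs = list(accumulate(diff))  # coeffs[k-1] is the coefficient of b_k
    # the last partial sum must vanish, since y - x has to lie in E
    return coeffs[-1] == 0 and all(c >= 0 for c in coeffs[:-1])

x = (1, 0, -1)  # a dominant element
print(all(precedes(p, x) for p in permutations(x)))
```

The order is only partial: $(0,1,-1)$ and $(1,-1,0)$ are incomparable, while every permutation of the dominant vector $(1,0,-1)$ is lower than it.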

Compare definition. In this section we always assume that we have chosen a fixed base or a fixed set of positive roots for a root system!

$\proof$ Suppose that $x$ is dominant, i.e. $x\in B^p$. As $B$ is a basis for $E$, we have a unique decomposition $$ x=\sum_{b\in B}\la x,b^*\ra b $$ where $\{b^*:b\in B\}$ denotes the dual basis. Expanding $b^*$ in terms of the basis $B$ we get: $$ b^*=\sum_{c\in B}\la b^*,c^*\ra c \quad\mbox{and therefore}\quad x=\sum_{c,b\in B}\la b^*,c^*\ra\la x,c\ra b~. $$ Now $x$ is dominant, i.e. $x\in B^p$, which means that for all $c\in B$: $\la x,c\ra\geq0$. To finish the proof we need to verify that for all $c,b\in B$: $\la b^*,c^*\ra\geq0$, which will be done in the subsequent lemma. $\eofproof$

$\proof$ We proceed by induction on the dimension $n$: For $n=2$ this is just example. So assume the assertion is true for dimension $n\geq2$. Choose $j < k$ in $\{1,\ldots,n,n+1\}$ and select any number $m$ in $\{1,\ldots,n,n+1\}\sm\{j,k\}$ - this is possible, as $n+1\geq3$. W.l.o.g. assume $m=n+1$. Denote by $P$ the orthogonal projection onto the sub-space $F$ orthogonal to $x_{n+1}$, i.e. $$ Px=x-\frac{\la x,x_{n+1}\ra}{\Vert x_{n+1}\Vert^2}x_{n+1} $$ As for all $l\leq n$: $\la x_l^*,x_{n+1}\ra=0$, we have for all $l\leq n$: $Px_l^*=x_l^*$. Orthogonal projections are self-adjoint, hence we get $$ \forall l,m\leq n:\quad \la x_l^*,Px_m\ra=\la Px_l^*,x_m\ra=\la x_l^*,x_m\ra $$ i.e. $Px_l$, $l\leq n$, is the dual basis for the basis $x_l^*$, $l\leq n$, of $F$. Since for all $l\leq n$: $\la x_l,x_{n+1}\ra < 0$ we finally have for all $l\neq m\leq n$: \begin{eqnarray*} \la Px_l,Px_m\ra &=&\la x_l,Px_m\ra =\Big\langle x_l,x_m-\frac{\la x_m,x_{n+1}\ra}{\Vert x_{n+1}\Vert^2}x_{n+1}\Big\rangle\\ &=&\la x_l,x_m\ra-\frac{\la x_m,x_{n+1}\ra\la x_l,x_{n+1}\ra}{\Vert x_{n+1}\Vert^2} < \la x_l,x_m\ra < 0~. \end{eqnarray*} By induction hypothesis this implies in particular: $\la x_j^*,x_k^*\ra > 0$. $\eofproof$

For all $l\leq r$: $$ Px_l =\sum_{j,k=1}^rg^{jk}\la x_l,x_j\ra x_k =\sum_{j,k=1}^rg^{jk}g_{lj}x_k =\sum_{k=1}^r\d_{lk}x_k =x_l $$ i.e. $P|F=id$. Analogously we get $$ \la Px,x_l\ra =\sum_{j,k=1}^rg^{jk}\la x,x_j\ra\la x_k,x_l\ra =\sum_{j,k=1}^rg^{jk}g_{kl}\la x,x_j\ra =\sum_{j=1}^r\d_{jl}\la x,x_j\ra =\la x,x_l\ra $$ i.e. $(1-P)x\in F^\perp$.

$$ \la u_j,u_k\ra =\sum_{l,m=1}^nr^{lj}r^{mk}g_{lm} =\sum_{l,m=1}^nr^{jl}g_{lm}r^{mk} =(G^{-1/2}GG^{-1/2})_{jk}=\d_{jk} $$

$\proof$

1. We know from corollary that the $W_R$-orbit $W_R(x)$ of $x$ contains a unique dominant element, which must be $x$. Split $W_R(x)$ into two sets: the first comprises all points comparable to $x$: for all these points $y$ we must have $y\preceq x$. The second set $P$ contains all points in the orbit $W_R(x)$ incomparable to $x$. Choose $p\in P$ maximal with respect to the order $\preceq$. We claim that $p\in B^p$, for otherwise there is some $b\in B$ such that $\la p,b\ra < 0$ and thus $$ R_b(p)=p-\frac{2\la p,b\ra}{\norm b^2}b $$ would be strictly higher than $p$. If $R_b(p)$ were not in $P$, then $p\preceq R_b(p)\preceq x$, i.e. $p\notin P$. But $R_b(p)\in P$ contradicts the maximality of $p$. Therefore we must have $p\in B^p$, i.e. $p$ is dominant and therefore $p=x$.

2. If $x$ is a strictly dominant integral element, then for all $b\in B$: $\la x,b^\vee\ra\in\Z\cap\R^+=\N$. Since for all $b\in B$: $\la r_0,b^\vee\ra=1$ it follows that for all $b\in B$: $\la x-r_0,b\ra\geq0$. $\eofproof$

$\proof$ As $C$ is compact and convex and $y\notin C$ there is some $y_1\in E$ such that $$ \la y_1,y\ra > \sup\{\la y_1,c\ra:\,c\in C\}~. $$ However, $y_1$ is not necessarily dominant, but by corollary there exists $y_0=w_0(y_1)$ in the $W_R$-orbit of $y_1$, which is dominant. Now we have on the one hand $$ \sup\{\la y_0,c\ra:\,c\in C\} =\sup\{\la y_1,w_0^{-1}c\ra:\,c\in C\} =\sup\{\la y_1,c\ra:\,c\in C\}~. $$ On the other hand we get by proposition $y_0-y_1\in\sum_{r\in R^+}\R_0^+r$. Finally, as $y$ is dominant, we conclude that $$ \la y_0,y\ra-\la y_1,y\ra =\la y_0-y_1,y\ra \in\sum_{r\in R^+}\R_0^+\la r,y\ra\sbe\R_0^+ $$ $\eofproof$

$\proof$

2. Assume $y\in\convex{W_R(x)}$, then $W_R(y)\sbe\convex{W_R(x)}$ and since all elements of $W_R(x)$ are lower than $x$ we conclude: $W_R(y)\sbe[.\preceq x]$. For the converse inclusion assume that for all $w\in W_R$: $w(y)\preceq x$. But by lemma we may choose $w_0\in W_R$ such that $w_0(y)$ is dominant, which by 1. implies $w_0(y)\in\convex{W_R(x)}$, i.e. $y\in\convex{w_0^{-1}W_R(x)}=\convex{W_R(x)}$. $\eofproof$