Universal Enveloping Algebra

Uniqueness of the universal enveloping algebra

Given a Lie-algebra $A$, an enveloping algebra of $A$ is a pair $(U,j)$, where $(U,+,.)$ is an associative algebra with unit $1$ and $j:A\rar U$ is a linear mapping such that $$ \forall x,y\in A:\quad j([x,y])=j(x)j(y)-j(y)j(x), $$ i.e. $j:A\rar(U,[.,.])$ is a Lie-algebra homomorphism with bracket $[u,v]\colon=uv-vu$.

The universal enveloping algebra $({\cal U}(A),j)$ of $A$ is an enveloping algebra of $A$ with the following universal property: for any enveloping algebra $(U,\pi)$ of $A$ there is a unique associative algebra homomorphism $\Pi:{\cal U}(A)\rar U$ such that $\pi=\Pi\circ j$.

The universal property ensures uniqueness up to isomorphism: Suppose $({\cal V}(A),k)$ is another universal enveloping algebra, then there are unique associative algebra homomorphisms $\Pi:{\cal U}(A)\rar{\cal V}(A)$ and $\Phi:{\cal V}(A)\rar{\cal U}(A)$ such that $k=\Pi\circ j$ and $j=\Phi\circ k$. Thus $\Phi\circ\Pi\circ j=j$ and $\Pi\circ\Phi\circ k=k$, but the universal property says that the identity mappings are the only associative algebra homomorphisms with these properties, i.e. $\Phi\circ\Pi=id$ and $\Pi\circ\Phi=id$. Hence ${\cal V}(A)$ and ${\cal U}(A)$ are isomorphic as associative algebras.

Constructing the universal enveloping algebra

The construction of ${\cal U}(A)$ is similar to the construction of the exterior algebra or, more generally, the Clifford-algebra of a vector space $E$ with symmetric bi-linear form $B:E\times E\rar\C$: in the latter case we have instead of $j([x,y])=j(x)j(y)-j(y)j(x)$ the condition: $j(x)j(y)+j(y)j(x)=-2B(x,y)1$, where $1$ denotes the unit - the choice $B=0$ produces the exterior algebra. We start off with the construction of ${\cal U}(A)$ by repeating the definition of the tensor algebra $({\cal T}(A),+,\otimes)$ of the vector space $A$: $$ {\cal T}(A)\colon=\C\oplus A\oplus A\otimes A\oplus A\otimes A\otimes A\oplus\cdots =\C\oplus A\oplus\bigoplus_{p=2}^\infty{\cal T}_p(A) =\bigoplus_{p=0}^\infty{\cal T}_p(A) $$ with unit $1\oplus0\oplus0\cdots$. ${\cal T}(A)$ is infinite dimensional and elements in ${\cal T}_p(A)$ are called $p$-tensors or tensors of degree $p$. $A$ itself may be viewed as a sub-space of the tensor algebra by $j:A\rar{\cal T}(A)$ mapping $x$ to the $1$-tensor. The importance of the tensor algebra is based on its universal property: for any linear map $\pi:A\rar B$ into an associative algebra $B$ there is a unique associative algebra homomorphism $\Pi:{\cal T}(A)\rar B$ such that $\Pi\circ j=\pi$ which we simply write as $\Pi|A=\pi$: as $\Pi$ is a homomorphism we must have $$ \Pi(x_1\otimes\cdots\otimes x_p)\colon=\pi(x_1)\cdots\pi(x_p)~. $$ Next we define a two sided ideal $I(A)$ in ${\cal T}(A)$ generated by all elements of the form $[x,y]-x\otimes y+y\otimes x$: $$ I(A)\colon=\Big\{\sum_{j=1}^nA_j\otimes([x_j,y_j]-x_j\otimes y_j+y_j\otimes x_j)\otimes B_j: n\in\N,A_j,B_j\in{\cal T}(A)\Big\} $$ and put ${\cal U}(A)\colon={\cal T}(A)/I(A)$. As $I(A)$ is a two sided ideal ${\cal U}(A)$ is again an associative algebra. 
It remains to check the universal property: So let $(U,\pi)$ be any enveloping algebra, then $\pi:A\rar U$ is linear and by the universal property of the tensor algebra there is a unique associative algebra homomorphism $\Pi:{\cal T}(A)\rar U$ such that $\Pi|A=\pi$. Moreover, for all $x,y\in A$ we have $$ \Pi([x,y]-x\otimes y+y\otimes x) =\pi([x,y])-\pi(x)\pi(y)+\pi(y)\pi(x) $$ which vanishes because $\pi:A\rar(U,[.,.])$ is by assumption a Lie-algebra homomorphism. As $\Pi$ is an associative algebra homomorphism we conclude that $I(A)\sbe\ker\Pi$ and thus there is a unique associative algebra homomorphism $\wh\Pi:{\cal T}(A)/I(A)\rar U$ such that $\Pi=\wh\Pi\circ Q$, where $Q:{\cal T}(A)\rar{\cal U}(A)$ denotes the quotient map. Finally for $x\in A$ we put $$ j\colon=Q|A \quad\mbox{and get:}\quad \pi(x)=\Pi(x)=\wh\Pi(j(x))~. $$ Though $\Pi$ and $\wh\Pi$ are unique, this does not yet show that $\wh\Pi$ is the only homomorphism $\vp:{\cal T}(A)/I(A)\rar U$ satisfying $\vp\circ j=\pi$. So let $\vp$ be another such homomorphism satisfying for all $x\in A$: $\vp(j(x))=\pi(x)$, then $\vp\circ Q:{\cal T}(A)\rar U$ is a homomorphism such that $\vp\circ Q|A=\pi$. By the uniqueness of $\Pi$ this means $\vp\circ Q=\Pi$, i.e. $\vp\circ Q=\wh\Pi\circ Q$; since $Q$ is onto we must have: $\vp=\wh\Pi$. This proves that $({\cal U}(A),j)$ is the universal enveloping algebra.

The Poincaré-Birkhoff-Witt Theorem

In the sequel we will use the symbols $+$ and $.$ to denote addition and multiplication in the quotient algebra ${\cal U}(A)$, i.e. for $X,Y\in {\cal T}(A)$ and $Q(X)=\wh X$, $Q(Y)=\wh Y$: $$ \wh X+\wh Y=Q(X+Y),\quad \wh X\wh Y=Q(XY),\quad Q^{-1}(\wh X)=X+I(A)~. $$ For the time being we don't know anything about the mapping $j$, which was defined as the restriction of the quotient map $Q:{\cal T}(A)\rar{\cal T}(A)/I(A)$ to $A$.

If we assume that there is a faithful representation $\pi_0:A\rar\Hom(E)$ - by exam such a representation exists if $A$ is a matrix algebra - then $j$ is injective, for $\pi_0(x)=\wh\Pi_0(j(x))$.
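As a small illustration of the faithful-representation argument, the following sketch takes $A=\sla(2,\C)$ with the standard basis $H,X,Y$ and its defining representation on $\C^2$. The choice of this example and the helper names `pi0` and `coordinates` are assumptions made here for illustration. It checks that the matrices satisfy the bracket relations, so that the $2\times2$ matrices together with `pi0` form an enveloping algebra, and that `pi0` has a left inverse, i.e. is faithful.

```python
# Defining representation pi_0 of sl(2,C) on C^2 (illustrative example).
H = [[1, 0], [0, -1]]
X = [[0, 1], [0, 0]]
Y = [[0, 0], [1, 0]]

def matmul(a, b):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def commutator(a, b):
    """Bracket [a,b] = ab - ba in the associative algebra of 2x2 matrices."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[ab[i][j] - ba[i][j] for j in range(2)] for i in range(2)]

def scale(c, a):
    """Scalar multiple c*a of a 2x2 matrix."""
    return [[c * a[i][j] for j in range(2)] for i in range(2)]

def pi0(h, x, y):
    """Image of h*H + x*X + y*Y under the defining representation."""
    return [[h, x], [y, -h]]

def coordinates(m):
    """Left inverse of pi0 on its image: read the coefficients off the matrix."""
    return (m[0][0], m[0][1], m[1][0])

# Bracket relations of sl(2,C): [H,X]=2X, [H,Y]=-2Y, [X,Y]=H.
print(commutator(H, X) == scale(2, X))
print(commutator(H, Y) == scale(-2, Y))
print(commutator(X, Y) == H)
# pi0 is injective because its coefficients can be recovered.
print(coordinates(pi0(3, -1, 4)))
```

Since `pi0` factors as $\wh\Pi_0\circ j$ and is injective, $j$ cannot send a non-zero element of $\sla(2,\C)$ to zero.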

The Poincaré-Birkhoff-Witt Theorem will show that $j$ is injective for any finite dimensional Lie-algebra $A$. This implies that $j$ maps the subspace $A$ of ${\cal T}(A)$ isomorphically onto its image $j(A)$, so we may identify $A$ and $j(A)$!

$\proof$

The proof of the reordering lemma shows that any expression of the form $j(b_{i_1})\cdots j(b_{i_m})$ can be expressed as a linear combination of terms of the form $j(b_1)^{k_1}\cdots j(b_d)^{k_d}$; if $l > i$ we just use the commutation relation $j(b_l)j(b_i)=j(b_i)j(b_l)+j([b_l,b_i])$ and the structure constants:

$$
[b_l,b_i]=\sum_mc_{mi}^lb_m~.
$$
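The reordering step can be sketched in code. The following is a minimal illustration, assuming $A=\sla(2,\C)$ with the ordered basis $b_0=Y$, $b_1=H$, $b_2=X$ (this ordering, the dictionary `BRACKET` and the function names are choices made here, not taken from the text). A word is a tuple of basis indices, and each descent $b_lb_i$ with $l > i$ is replaced by $b_ib_l+[b_l,b_i]$ until only non-decreasing words remain.

```python
# Non-zero brackets of sl(2,C) on the ordered basis b_0=Y, b_1=H, b_2=X,
# stored as {(l, i): {m: coefficient of b_m in [b_l, b_i]}}.
BRACKET = {
    (1, 2): {2: 2},    # [H, X] = 2X
    (1, 0): {0: -2},   # [H, Y] = -2Y
    (2, 0): {1: 1},    # [X, Y] = H
}

def bracket(a, b):
    """Expansion of [b_a, b_b] in the basis, using antisymmetry."""
    if a == b:
        return {}
    if (a, b) in BRACKET:
        return dict(BRACKET[(a, b)])
    return {m: -c for m, c in BRACKET[(b, a)].items()}

def normal_order(word):
    """Rewrite a word as a combination of non-decreasing words.

    Returns {ordered word: coefficient}; each step applies
    b_l b_i = b_i b_l + [b_l, b_i] at the first descent l > i.
    """
    result = {}
    stack = [(tuple(word), 1)]
    while stack:
        w, coeff = stack.pop()
        k = next((p for p in range(len(w) - 1) if w[p] > w[p + 1]), None)
        if k is None:                       # already ordered
            result[w] = result.get(w, 0) + coeff
            continue
        l, i = w[k], w[k + 1]
        stack.append((w[:k] + (i, l) + w[k + 2:], coeff))   # swapped word
        for m, c in bracket(l, i).items():                  # bracket term
            stack.append((w[:k] + (m,) + w[k + 2:], coeff * c))
    return {w: c for w, c in result.items() if c != 0}

print(normal_order((2, 0)))      # X Y
print(normal_order((2, 0, 0)))   # X Y Y
```

With this encoding the first call expresses $XY=YX+H$ and the second $XY^2=Y^2X+2YH-2Y$. The procedure terminates because every step either lowers the number of inversions or shortens the word.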

The proof that these vectors are linearly independent is much more involved:

We will find it convenient to write the element $j(b_1)^{k_1}\ldots j(b_d)^{k_d}$ as

$$
j(b_{n_1})\ldots j(b_{n_N}),\quad
1\leq n_1\leq\cdots\leq n_N\leq d,\quad
N\in\N_0
$$

and declare an index set ${\cal I}\colon=\{(n_1,\ldots,n_N): 1\leq n_1\leq\cdots\leq n_N\leq d,N\in\N_0\}$. We are going to construct a linear map $\d:{\cal T}(A)\rar V$ into the vector space $V$ generated by a basis $v_{(n_1,\ldots,n_N)}$. To keep the formulas more readable we omit the symbol for the multiplication $\otimes$ in the tensor algebra ${\cal T}(A)$. $\d$ should have the following two properties:

\begin{equation}\label{ueaeq1}\tag{UEA1}
\d(b_{n_1}\cdots b_{n_N})=v_{(n_1,\ldots,n_N)}~.
\end{equation}

The second property will be that for all $N\in\N$, all $N$-tuples $(n_1,\ldots,n_N)\in\{1,\ldots,d\}^N$ and all $1\leq k < N$:

\begin{equation}\label{ueaeq2}\tag{UEA2}
\d(b_{n_1}\cdots b_{n_k} b_{n_{k+1}}\cdots b_{n_N}-b_{n_1}\cdots b_{n_{k+1}} b_{n_k}\cdots b_{n_N}-b_{n_1}\cdots [b_{n_k},b_{n_{k+1}}]\cdots b_{n_N})=0~.
\end{equation}

As any element in the ideal $I(A)$ is a linear combination of elements of the form

$$
b_{n_1}\cdots b_{n_k} b_{n_{k+1}}\cdots b_{n_N}-b_{n_1}\cdots b_{n_{k+1}} b_{n_k}\cdots b_{n_N}-b_{n_1}\cdots [b_{n_k},b_{n_{k+1}}]\cdots b_{n_N}
$$

the linear map $\d$ vanishes on $I(A)$ and thus induces a linear map $\wh\d:{\cal T}(A)/I(A)\rar V$ satisfying $\d=\wh\d\circ Q$. From $\d(b_I)=v_I$ we infer that $Q(b_I)$, $I\in{\cal I}$, must be linearly independent.

Before embarking on the general case a few instructive cases will be elucidated; it will turn out that the existence of the map $\d$ relies basically on one relation: the Jacobi identity!

- Suppose we have two basis vectors $b_j$ and $b_k$; for $j\leq k$ \eqref{ueaeq1} dictates that: $\d(b_jb_k)=v_{(j,k)}$ and in case $j > k$ by \eqref{ueaeq2} we need to put: $$ \d(b_jb_k)\colon=\d(b_kb_j)+\d([b_j,b_k]), $$ which we simply write as $jk\colon=kj+[j,k]$, so in particular for \eqref{ueaeq2} we now write: \begin{equation}\label{ueaeq3}\tag{UEA3} n_1\cdots n_kn_{k+1}\cdots n_N-n_1\cdots n_{k+1}n_k\cdots n_N-n_1\cdots [n_k,n_{k+1}]\cdots n_N=0~. \end{equation}

- Next look at the case of a triple: $(i,j,k)\in\{1,\ldots,d\}^3$ - we assume that $\d$ is already defined on all pairs. By the first property \eqref{ueaeq1} $ijk$ is defined only for $i\leq j\leq k$.

- If $i\leq k < j$ then define $$ ijk\colon=ikj+i[j,k] $$

- If $k < i \leq j$, then: $$ ijk\colon=ikj+i[j,k],\quad ikj\colon=kij+[i,k]j,\quad\mbox{i.e.}\quad ijk=kij+[i,k]j+i[j,k] $$

- If $k < j < i$ then there are two ways: $$ ijk=ikj+i[j,k],\quad ikj=kij+[i,k]j,\quad kij=kji+k[i,j],\quad\mbox{i.e.}\quad ijk=kji+i[j,k]+[i,k]j+k[i,j] $$ Another way: $$ ijk=jik+[i,j]k,\quad jik=jki+j[i,k],\quad jki=kji+[j,k]i,\quad\mbox{i.e.}\quad ijk=kji+[i,j]k+j[i,k]+[j,k]i $$ As $\d$ must be well defined, we must have $$ 0=i[j,k]-[j,k]i+[i,k]j-j[i,k]+k[i,j]-[i,j]k =[i,[j,k]]+[[i,k],j]+[k,[i,j]] $$ which is Jacobi's identity.

- Finally let's look at a quadruple $ijkl$. We just delineate the case $j < i\leq l < k$: $$ ijkl=ijlk+ij[k,l]=jilk+[i,j]lk+ji[k,l]+[i,j][k,l] $$ or the other way: $$ ijkl=jikl+[i,j]kl=jilk+ji[k,l]+[i,j]lk+[i,j][k,l] $$ but both expressions coincide.

Finally we define $\d(b_{n_1}\ldots b_{n_{N+1}})$ for $n_1\leq\cdots\leq n_{N+1}$ by \eqref{ueaeq1} and proceed by induction on the 'index' $p$ as before. $\eofproof$

That's the number of ways to distribute $k=\sum_{j=1}^dn_j$ indistinguishable presents among $d$ children, which is $$ {k+d-1\choose k} $$

Define $\d$ on the presumed basis as in the proof of the theorem but instead of \eqref{ueaeq3} declare for any $(n_1,\ldots,n_N)\in\{1,\ldots,d\}^N$: $$ n_1\cdots n_kn_{k+1}\cdots n_N+n_1\cdots n_{k+1}n_k\cdots n_N+2B(n_k,n_{k+1})n_1\cdots n_{k-1}n_{k+2}\cdots n_N=0~. $$ We only handle the case $k < j < i$: $$ ijk=-ikj-2B(j,k)i,\quad -ikj=kij+2B(i,k)j,\quad kij=-kji-2B(i,j)k $$ and therefore $ijk=-kji-2B(j,k)i+2B(i,k)j-2B(i,j)k$. Following the other way: $$ ijk=-jik-2B(i,j)k,\quad -jik=jki+2B(i,k)j,\quad jki=-kji-2B(j,k)i $$ and therefore $ijk=-kji-2B(i,j)k+2B(i,k)j-2B(j,k)i$, which is exactly the same expression. Thus apart from symmetry we do not need any restriction on $B$!
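The claim that both reduction paths agree in the Clifford case can be checked mechanically. The sketch below is an illustration under assumptions: the symmetric integer matrix `B`, the dimension $d=3$ and the function name `reduce_word` are chosen here and do not come from the text. It applies the rule $ab=-ba-2B(a,b)$ for $a > b$ and its special case $aa=-B(a,a)$, once always at the leftmost and once always at the rightmost admissible position, and compares the results.

```python
from itertools import product

# An arbitrary symmetric bilinear form on basis indices 0, 1, 2 (assumed).
B = [[1, 2, 3],
     [2, 5, 7],
     [3, 7, 11]]

def reduce_word(word, leftmost=True):
    """Reduce a word to a combination of strictly increasing words.

    Uses a b = -b a - 2 B(a,b) for a > b and a a = -B(a,a).
    Returns {increasing word: coefficient}; the empty tuple stands for 1.
    """
    result = {}
    stack = [(tuple(word), 1)]
    while stack:
        w, c = stack.pop()
        spots = [k for k in range(len(w) - 1) if w[k] >= w[k + 1]]
        if not spots:                        # strictly increasing: done
            result[w] = result.get(w, 0) + c
            continue
        k = spots[0] if leftmost else spots[-1]
        a, b = w[k], w[k + 1]
        rest = w[:k] + w[k + 2:]
        if a == b:
            stack.append((rest, -c * B[a][a]))
        else:
            stack.append((w[:k] + (b, a) + w[k + 2:], -c))
            stack.append((rest, -2 * c * B[a][b]))
    return {w: c for w, c in result.items() if c != 0}

# The case k < j < i from the text, with i=2, j=1, k=0.
print(reduce_word((2, 1, 0)))
# Both strategies agree on every word of length 3 and 4.
print(all(reduce_word(w, True) == reduce_word(w, False)
          for n in (3, 4) for w in product(range(3), repeat=n)))
```

For the word $ijk=(2,1,0)$ the result is $-kji-2B(j,k)i+2B(i,k)j-2B(i,j)k$ with the numerical values of `B` inserted, in agreement with the formula above. Only the symmetry of `B` is used.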

2. If $\pi(x)^2+B(x,x)1=0$, then $\pi(x+y)^2+B(x+y,x+y)1=0$, i.e. $$ 0=\pi(x)^2+\pi(y)^2+\pi(x)\pi(y)+\pi(y)\pi(x)+B(x,x)+B(y,y)+2B(x,y) =\pi(x)\pi(y)+\pi(y)\pi(x)+2B(x,y) $$ Hence the homomorphism $\Pi:{\cal T}(A)\rar A$ factorizes through $\Cl(E,B)$, i.e. there is a homomorphism $\wh\Pi:\Cl(E,B)\rar A$ such that $\Pi=\wh\Pi\circ Q$. As for uniqueness suppose $\vp:\Cl(E,B)\rar A$ is another homomorphism satisfying $\vp(j(x))=\pi(x)$. Then $\vp\circ Q:{\cal T}(A)\rar A$ is a homomorphism and $\vp\circ Q(x)=\pi(x)$, i.e. $\vp\circ Q=\Pi=\wh\Pi\circ Q$. Since $Q$ is onto we conclude that $\vp=\wh\Pi$.

Verma Modules

Construction of Verma modules

An associative algebra homomorphism $\psi:{\cal U}(A)\rar\Hom(E)$ will also be called a representation of ${\cal U}(A)$. We have a sort of left-regular representation (cf. subsection) $\g:{\cal U}(A)\rar\Hom({\cal U}(A))$, $\g(X)Y\colon=XY$; it's indeed a representation: $$ \g(X_1X_2)Y =X_1X_2Y =\g(X_1)(\g(X_2)Y) =\g(X_1)\circ\g(X_2)Y, $$ i.e. $\g$ is an associative algebra homomorphism.

Now suppose $I$ is a left ideal in ${\cal U}(A)$, i.e. $I$ is invariant under $\g$, then $\g(X)(Y+I)=XY+XI\sbe XY+I$, which shows that we get a representation $\wh\g_I:{\cal U}(A)\rar\Hom({\cal U}(A)/I)$ satisfying \begin{equation}\label{vemeq1}\tag{VEM1} \wh\g_I(X)q_I(Y)=q_I(\g(X)Y), \end{equation} where $q_I:{\cal U}(A)\rar{\cal U}(A)/I$ denotes the quotient map. Restricting $\wh\g_I$ to $j(A)\sbe{\cal U}(A)$ we get a representation of the Lie-algebra $(A,[.,.])$: for all $x,y\in A$ we indeed have $$ \wh\g_I(j([x,y])) =\wh\g_I(j(x)j(y)-j(y)j(x)) =\wh\g_I(j(x))\wh\g_I(j(y))-\wh\g_I(j(y))\wh\g_I(j(x)) =[\wh\g_I(j(x)),\wh\g_I(j(y))]~. $$ Writing $\g_I:A\rar\Hom({\cal U}(A)/I)$ for $\wh\g_I\circ j$ we see that $$ \forall x,y\in A:\quad\g_I([x,y])=[\g_I(x),\g_I(y)]~. $$

A subspace $W$ of ${\cal U}(A)/I$ is $\g_I$-invariant iff for all $x\in A$: $\g_I(x)W\sbe W$, i.e. iff for all $x\in A$: $\g(x)(W)\sbe W+I$ or simply: $A(W+I)\sbe W+I$, which by the Poincaré-Birkhoff-Witt Theorem holds iff $E\colon=W+I=q_I^{-1}(W)$ is invariant under all $X\in{\cal U}(A)$ and this means that $E$ is a left ideal in ${\cal U}(A)$. In particular $\g_I$ is irreducible iff $I$ and ${\cal U}(A)$ are the only left ideals containing $I$, i.e. $I$ must be a maximal left ideal - cf. section.

Ideals in ${\cal U}(A)$ can be found via representations $\psi:A\rar\Hom(E)$: By exam any representation $\psi:A\rar\Hom(E)$ of the Lie-algebra $A$ gives rise to a unique representation $\Psi:{\cal U}(A)\rar\Hom(E)$ satisfying $\psi=\Psi\circ j$; since $\Psi$ is an associative algebra homomorphism the space $\ker\Psi\colon=\{X\in{\cal U}(A):\Psi(X)=0\}$ is a two sided ideal in ${\cal U}(A)$. In proving the following lemma we will define for each $\l$ in the Cartan algebra $H$ of $A$ a one dimensional representation $\s_\l:B\rar\C$: let $R^+$ be a system of positive roots in $H$; put \begin{equation}\label{vemeq2}\tag{VEM2} A^+\colon=\lhull{\{A_r:\,r\in R^+\}},\quad A^-\colon=\lhull{\{A_r:\,r\in -R^+\}},\quad\mbox{and}\quad B\colon=H\oplus A^+~. \end{equation} By proposition all these sub-spaces $A^+,A^-$ and $B$ are sub-algebras of the Lie-algebra $A$.

$\proof$ We define the one dimensional representation $\s_\l:B\rar\C$ by $$ \forall h\in H\,\forall x\in A^+:\quad \s_\l(h+x)\colon=\la h,\l\ra, $$ which is indeed a representation, because for $h,k\in H=A_0$, $x\in A_r$ and $y\in A_s$ we have by proposition: $$ [h+x,k+y] =[h,y]+[x,k]+[x,y] \in A_s+A_r+A_{r+s} \sbe A^+ $$ and therefore for all $x,y\in A^+$: $[h+x,k+y]\in A^+$. Hence $\s_\l([h+x,k+y])=0$ and as $\C$ is commutative, $\s_\l$ is a representation. Let $\Sigma_\l:{\cal U}(B)\rar\C$ be the unique associative algebra homomorphism extending $\s_\l$, then $\ker\Sigma_\l$ is an ideal containing $A^+$; moreover we have for all $h\in H$: $$ \Sigma_\l(h-\la h,\l\ra1) =\s_\l(h)-\la h,\l\ra\Sigma_\l(1) =\la h,\l\ra-\la h,\l\ra =0 $$ Hence $J_\l\sbe\ker\Sigma_\l$. On the other hand we get by the definition of $\Sigma_\l:{\cal U}(B)\rar\C$: $\Sigma_\l(1)=1$, i.e. $1\notin J_\l$. $\eofproof$

For any $\l$ in the Cartan algebra $H$ of $A$ let $I_\l$ be the left ideal in ${\cal U}(A)$ generated by the set of all root vectors $x\in A^+$ and the set of all vectors $h-\la h,\l\ra1$, $h\in H$. The quotient $W_\l\colon={\cal U}(A)/I_\l$ is called a Verma module with weight $\l$ and quotient map $q_\l:{\cal U}(A)\rar W_\l$.

$\proof$

1. $v_0\neq 0$: We choose a basis $y_1,\ldots,y_k$ for $A^-$. Since $A=A^-\oplus B$ we infer from the Poincaré-Birkhoff-Witt Theorem that any element $X$ of ${\cal U}(A)$ can be written as

\begin{equation}\label{vemeq3}\tag{VEM3}
X=\sum_{n_1,\ldots,n_k\in\N_0}y_1^{n_1}\cdots y_k^{n_k}b(n_1,\ldots,n_k)
\end{equation}

with uniquely determined coefficients $b(n_1,\ldots,n_k)\in{\cal U}(B)$. Now assume that $X\in I_\l$, then $X$ is a linear combination of elements of the form

$$
y_1^{n_1}\cdots y_k^{n_k}b_1(n_1,\ldots,n_k)(h-\la h,\l\ra1)\quad\mbox{and}\quad
y_1^{n_1}\cdots y_k^{n_k}b_2(n_1,\ldots,n_k)x,
$$

for $b_1(),b_2()\in{\cal U}(B)$, $h\in H$ and $x\in A^+$. As

$$
b_1(n_1,\ldots,n_k)(h-\la h,\l\ra1),\ b_2(n_1,\ldots,n_k)x\in J_\l
$$

and the expansion \eqref{vemeq3} of $X$ in terms of $y_1^{n_1}\cdots y_k^{n_k}$ with coefficients $b(n_1,\ldots,n_k)\in {\cal U}(B)$ is unique, we must have for $X\in I_\l$:

$$
b(n_1,\ldots,n_k)\in J_\l\sbe{\cal U}(B)~.
$$

For $X=1$ we therefore conclude that the only non zero coefficient is $b(0,\ldots,0)$ and this coefficient must be $1$ - the unit in ${\cal U}(B)$. However, by lemma this is impossible. Hence $1\notin I_\l$, i.e. $q_\l(1)\neq0$.

2. $v_0$ is a weight vector for $\g_\l$ with weight $\l$: For all $x\in A$ and all $Y\in{\cal U}(A)$ we have by \eqref{vemeq1}: $\g_\l(x)q_\l(Y)=q_\l(\g(x)Y)$, this implies that for all $h\in H$: $$ \g_\l(h-\la h,\l\ra1)v_0 =\g_\l(h-\la h,\l\ra1)q_\l(1) =q_\l(\g(h-\la h,\l\ra1)1) =q_\l(h-\la h,\l\ra1) =0 $$ because $h-\la h,\l\ra1\in I_\l$. Hence for all $h\in H$: $\g_\l(h)v_0=\la h,\l\ra v_0$, which means that $v_0$ is a weight vector for $\g_\l$ with weight $\l$.

3. For all $x\in A^+$: $\g_\l(x)v_0=0$: It suffices to verify this for all $x\in A_r$, $r\in R^+$: $$ \g_\l(x)v_0 =q_\l(\g(x)1) =q_\l(x) =0~. $$

4. $v_0$ is cyclic: Suppose that $V_0$ is a $\g_\l$-invariant sub-space of $W_\l$ containing $v_0$, then $V_0$ is $\wh\g_\l$-invariant because all elements in ${\cal U}(A)$ are sums of products of elements in $A$. Hence $V_0$ contains for all $X\in{\cal U}(A)$: $$ \wh\g_\l(X)v_0 =q_\l(\g(X)1) =q_\l(X), $$ which shows that $V_0=W_\l$, because $q_\l$ is onto.

5. Assume to the contrary that these elements are linearly dependent, i.e. for some coefficients $b(n_1,\ldots,n_k)\in\C$: $$ \sum\g_\l(y_1)^{n_1}\cdots\g_\l(y_k)^{n_k}v_0b(n_1,\ldots,n_k)=0~. $$ By \eqref{vemeq1} this means that in ${\cal U}(A)$: $$ \sum y_1^{n_1}\cdots y_k^{n_k}b(n_1,\ldots,n_k)\in I_\l~. $$ By 1. this implies that all coefficients $b(n_1,\ldots,n_k)$ must lie in $J_\l$, but by lemma the only constant in $J_\l$ is zero. $\eofproof$

Let $R^+=\{r_1,\ldots,r_k\}$. If $y_1,\ldots,y_k$ is a basis of root vectors for $A^-$ with $y_j\in A_{-r_j}$, then $\g_\l(y_1)^{n_1}\cdots\g_\l(y_k)^{n_k}v_0$ is a weight vector for $\g_\l$ with weight $\mu\colon=\l-n_1r_1-\cdots-n_kr_k$. Moreover, the multiplicity of $\mu$ is the number $p(\l-\mu)$ of $k$-tuples $(m_1,\ldots,m_k)\in\N_0^k$ satisfying the equation: $\sum m_jr_j=\l-\mu$. This number $p(\l-\mu)$ is called the Kostant partition function - cf. section. In particular $W_\l$ is the direct sum of its weight spaces, all of which are finite dimensional.

We know that if $v$ is a weight vector with weight $\mu$, then $\g_\l(y_r)v$ is a weight vector with weight $\mu-r$. Hence $\g_\l(y_1)^{n_1}\cdots\g_\l(y_k)^{n_k}v_0$ is a weight vector for $\g_\l$ with weight $\mu\colon=\l-n_1r_1-\cdots-n_kr_k$. By theorem the vectors $\g_\l(y_1)^{n_1}\cdots\g_\l(y_k)^{n_k}v_0$ form a basis for $W_\l$, i.e. we have found all weights of $\g_\l:A\rar\Hom(W_\l)$ and $W_\l$ is the direct sum of its weight spaces.
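The Kostant partition function is easy to compute by brute force for small examples. The sketch below is an illustration under assumptions: roots and weights are written as integer coordinate tuples with respect to the simple roots, and the function name `kostant_partition` and the $\sla(3,\C)$ example are choices made here. It counts the tuples $(m_1,\ldots,m_k)\in\N_0^k$ with $\sum m_jr_j=\beta$.

```python
from functools import lru_cache

def kostant_partition(beta, positive_roots):
    """Number of ways to write beta as a sum of positive roots.

    beta and the roots are tuples of non-negative integers (coordinates
    in the simple roots); each multiset of roots is counted once, which
    is the same as counting the tuples (m_1, ..., m_k).
    """
    roots = [tuple(r) for r in positive_roots]

    @lru_cache(maxsize=None)
    def count(remaining, start):
        if all(c == 0 for c in remaining):
            return 1
        total = 0
        # Only use roots with index >= start, so every multiset of roots
        # is generated in exactly one (non-decreasing) order.
        for idx in range(start, len(roots)):
            nxt = tuple(c - r for c, r in zip(remaining, roots[idx]))
            if all(c >= 0 for c in nxt):
                total += count(nxt, idx)
        return total

    return count(tuple(beta), 0)

# Positive roots of sl(3,C) in the basis of simple roots a1, a2.
SL3 = [(1, 0), (0, 1), (1, 1)]
print(kostant_partition((1, 1), SL3))   # a1 + a2  or  (a1+a2)
print(kostant_partition((2, 3), SL3))
```

In this example $p(a_1+a_2)=2$, so the weight $\l-a_1-a_2$ of the corresponding Verma module has multiplicity $2$.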

Verma modules of $\sla(2,\C)$

For the Lie-algebra $A=\sla(2,\C)$ we have: $R^+=\{H\}$ and $I_\l$ is the left ideal generated by $H-\la H,\l\ra1$ and $X$. A basis for the Verma module $W_\l$ is $v_m\colon=\g_\l(Y)^mv_0$, $m\in\N_0$. As $\la H,H\ra=2$ it follows by induction on $m$: \begin{eqnarray*} \g_\l(H)v_m&=&(\la H,\l\ra-2m)v_m\\ \g_\l(X)v_m&=&m(\la H,\l\ra-m+1)v_{m-1}\\ \g_\l(Y)v_m&=&v_{m+1}~. \end{eqnarray*} We just verify the inductive step for $\g_\l(X)v_m$: \begin{eqnarray*} \g_\l(X)v_m &=&[\g_\l(X),\g_\l(Y)]v_{m-1}+\g_\l(Y)\g_\l(X)v_{m-1}\\ &=&\g_\l(H)v_{m-1}+\g_\l(Y)(m-1)(\la H,\l\ra-m+2)v_{m-2}\\ &=&(\la H,\l\ra-2(m-1))v_{m-1}+(m-1)(\la H,\l\ra-m+2)v_{m-1} =m(\la H,\l\ra-m+1)v_{m-1} \end{eqnarray*}

In section we defined for any $l\in\N_0$ a representation $\psi_l:\sla(2,\C)\rar\Hom(\lhull{u_{-l},u_{-l+2},\ldots,u_l})$ by: \begin{eqnarray*} \psi_l(H)u_{l-2m}&=&(l-2m)u_{l-2m},\quad m=0,\ldots,l\\ \psi_l(X)u_{l-2m}&=&m(l-m+1)u_{l-2(m-1)},\\ \psi_l(Y)u_{l-2m}&=&u_{l-2m-2}~. \end{eqnarray*} Put $v_m=u_{l-2m}$, then with $v_m=0$ for $m < 0$: \begin{eqnarray*} \psi_l(H)v_m&=&(l-2m)v_m,\\ \psi_l(X)v_m&=&m(l-m+1)v_{m-1},\\ \psi_l(Y)v_m&=&v_{m+1}~. \end{eqnarray*} Hence the formulas for $\g_\l$ coincide with those for $\psi_l$ provided $l=\la H,\l\ra\in\N_0$, except that in $W_\l$ the vectors $v_m$, $m > l$, do not vanish. For this value of $\la H,\l\ra$ we have $\g_\l(X)v_{l+1}=0$ and thus the subspace $V_l$ generated by $v_{l+1},v_{l+2},\ldots$ is $\g_\l$-invariant. Hence we get a representation of $\sla(2,\C)$ in the quotient space $W_\l/V_l$, which is just the irreducible representation we studied in section.

Irreducible Quotients of Verma Modules
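As a concrete instance of such a quotient, the $\sla(2,\C)$ formulas $\g_\l(H)v_m=(a-2m)v_m$, $\g_\l(X)v_m=m(a-m+1)v_{m-1}$, $\g_\l(Y)v_m=v_{m+1}$ with $a=\la H,\l\ra$ can be checked mechanically. The sketch below is an illustration under assumptions: a vector is stored as a dictionary `{m: coefficient}` for $\sum c_mv_m$, and the function names are chosen here. It verifies the bracket relations on basis vectors and shows that $X$ kills $v_{l+1}$ when $a=l\in\N_0$.

```python
def act_H(a, v):
    """H v_m = (a - 2m) v_m, extended linearly."""
    return {m: (a - 2 * m) * c for m, c in v.items() if (a - 2 * m) * c != 0}

def act_X(a, v):
    """X v_m = m (a - m + 1) v_{m-1}, with X v_0 = 0."""
    return {m - 1: m * (a - m + 1) * c for m, c in v.items()
            if m > 0 and m * (a - m + 1) * c != 0}

def act_Y(a, v):
    """Y v_m = v_{m+1}; the parameter a is unused, kept for symmetry."""
    return {m + 1: c for m, c in v.items() if c != 0}

def sub(u, w):
    """Difference u - w of two vectors, dropping zero coefficients."""
    out = dict(u)
    for m, c in w.items():
        out[m] = out.get(m, 0) - c
    return {m: c for m, c in out.items() if c != 0}

def scale(s, v):
    """Scalar multiple s*v, dropping zero coefficients."""
    return {m: s * c for m, c in v.items() if s * c != 0}

def relations_hold(a, max_m=12):
    """Check [X,Y]=H, [H,X]=2X, [H,Y]=-2Y on v_0, ..., v_{max_m}."""
    for m in range(max_m + 1):
        v = {m: 1}
        xy = sub(act_X(a, act_Y(a, v)), act_Y(a, act_X(a, v)))
        hx = sub(act_H(a, act_X(a, v)), act_X(a, act_H(a, v)))
        hy = sub(act_H(a, act_Y(a, v)), act_Y(a, act_H(a, v)))
        if xy != act_H(a, v) or hx != scale(2, act_X(a, v)) \
                or hy != scale(-2, act_Y(a, v)):
            return False
    return True

print(all(relations_hold(a) for a in (-3, 0, 1, 4, 7)))
l = 3
print(act_X(l, {l + 1: 1}))   # empty dict: X v_{l+1} = 0
```

The empty dictionary in the last line reflects that $v_{l+1}$ is annihilated by $X$, so $v_{l+1},v_{l+2},\ldots$ span an invariant subspace and the quotient has the basis $v_0,\ldots,v_l$.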

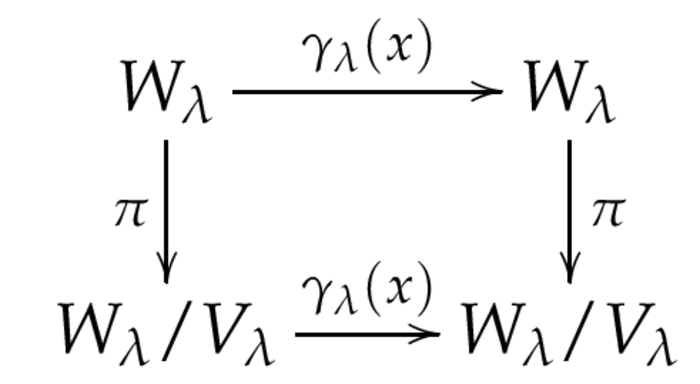

We are looking for a maximal subspace $V_\l$ of the Verma module $W_\l$ invariant under $\g_\l$. By exam any vector $v$ in $W_\l$ can be represented as a unique linear combination of weight vectors. As one of these weight vectors is $v_0$ we can speak about the $v_0$-component of $v$ - which is $v_0^*(v)$, where $v_0^*$ is just the corresponding vector to $v_0$ in the dual basis.

$\proof$ We have to show that for all $v\in V_\l$ and all $x\in A$ the element $\g_\l(x)v$ is again in $V_\l$, i.e. the $v_0$-component of any product $$ \g_\l(x_1)\cdots\g_\l(x_n)\g_\l(x)v,\quad x_1,\ldots,x_n\in A^+ $$ equals $0$. By the reordering lemma this element is a linear combination of elements of the form \begin{equation}\label{iqveq1}\tag{IQV1} \g_\l(y_1)\cdots\g_\l(y_m)\g_\l(h_1)\cdots\g_\l(h_l)\g_\l(z_1)\cdots\g_\l(z_k)v \end{equation} where $y_j\in A^-$, $h_j\in H$ and $z_j\in A^+$. Since $v\in V_\l$ the $v_0$-component of $v_1\colon=\g_\l(z_1)\cdots\g_\l(z_k)v$ vanishes and thus $v_1$ is a finite sum $\sum w_\mu$ of weight vectors $w_\mu$ for weights $\mu$ strictly lower than $\l$. Now for all $h\in H$ the vector $\g_\l(h)w_\mu$ is again a weight vector with weight $\mu$ and for all $y\in A^-$ the vector $\g_\l(y)w_\mu$ is a weight vector with weight strictly lower than $\mu$. Hence the $v_0$-component of any element in \eqref{iqveq1} vanishes. $\eofproof$

Knowing that $V_\l$ is $\g_\l$-invariant, we can define the induced representation $\g_\l:A\rar\Hom(W_\l/V_\l)$ (or $\wh\g_\l:{\cal U}(A)\rar\Hom(W_\l/V_\l)$) with quotient map $\pi:W_\l\rar W_\l/V_\l$: we define for $x\in A$ and $v\in W_\l$ the expression $\g_\l(x)(\pi(v))$ by $\pi(\g_\l(x)v)$, i.e. we have the following commutative diagram: