Some Examples

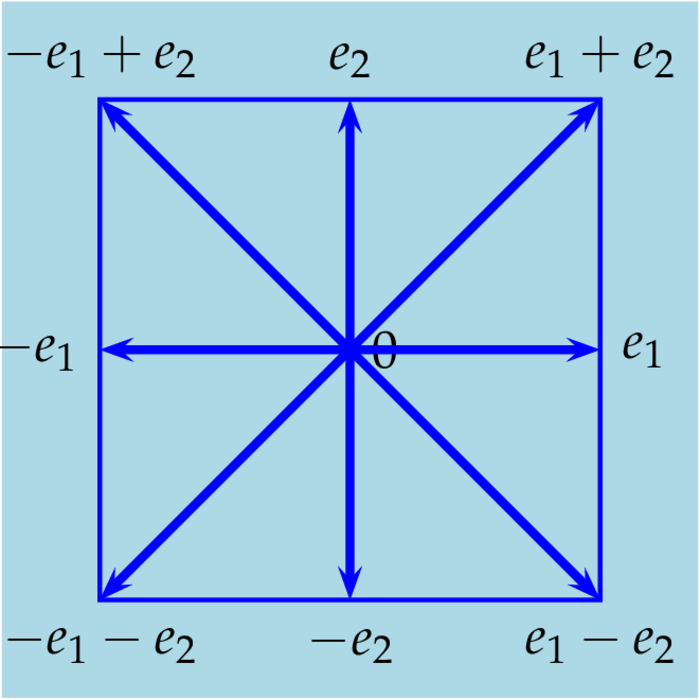

The Lie algebra $\sla(n,\C)$

As underlying real Lie algebra we choose $\su(n)$ and as a Cartan subalgebra $$ \Big\{diag\{ia_1,\ldots,ia_n\}:\,a_1,\ldots,a_n\in\R,\sum a_j=0\Big\}~. $$ Any matrix $T\in\su(n)$ is similar to a matrix in this Cartan subalgebra, i.e. there exists a matrix $U\in\SU(n)$ such that $T=\Ad(U)D$ for some matrix $D$ in this Cartan subalgebra. Moreover, $\sla(n,\C)=\su(n)_\C$, and the complexification ${\cal H}$ of the Cartan subalgebra is the set of all diagonal matrices $diag\{a_1,\ldots,a_n\}$ with $a_j\in\C$ and $\sum a_j=0$. Obviously $[H_1,H_2]=0$ for all $H_1,H_2\in{\cal H}$, i.e. ${\cal H}$ is commutative. To prove maximality, suppose $[X,H]=0$ for all $H\in{\cal H}$; in particular $[X,E^{jj}-E^{kk}]=0$ for all $j < k$, since $E^{jj}-E^{kk}\in{\cal H}$. Now $$ (XE^{jj})_{lm}=\sum_r X_{lr}E^{jj}_{rm}=X_{lj}\d_{jm} \quad\mbox{and}\quad (E^{jj}X)_{lm}=\sum_r E^{jj}_{lr}X_{rm}=X_{jm}\d_{jl} $$ and therefore by exam: $$ [X,E^{jj}-E^{kk}]_{lm} =X_{lj}\d_{jm}-X_{jm}\d_{jl}-X_{lk}\d_{km}+X_{km}\d_{kl}~. $$ For $l,m\notin\{j,k\}$ or $l=m=j$ or $l=m=k$ the right hand side equals $0$; for $l=j$ and $m=k$ we get $-2X_{jk}$, and for $l=k$ and $m=j$ we get $2X_{kj}$. Hence, if $[X,E^{jj}-E^{kk}]=0$ for all $j < k$, then all off-diagonal entries of $X$ vanish, i.e. $X$ is diagonal and, being traceless, an element of ${\cal H}$. This proves that ${\cal H}$ is maximal. Finally, from exam we infer that for all $X\in\su(n)$ the mapping $\ad(X)$ is skew-Hermitian with respect to the Euclidean inner product $\la X,Y\ra\colon=\tr(XY^*)$, where $X^*\colon=\bar X^t$. More generally $\ad(H)^*=\ad(H^*)$ for all $H\in{\cal H}$, and since $H$ and $H^*$ commute, $\ad(H)$ is normal and therefore diagonalizable. Thus ${\cal H}$ is indeed a Cartan subalgebra. In order to compute the roots of $\sla(n,\C)$ we take any $H=diag\{a_1,\ldots,a_n\}\in{\cal H}$ and calculate $\ad(H)E^{jk}$; by exam we get: $$ \ad(H)E^{jk}=(a_j-a_k)E^{jk}, $$ i.e. for all $j\neq k$ the matrix $E^{jk}$ is a root vector, and we are left to find the corresponding root $R^{jk}\in{\cal H}$ such that $a_j-a_k=\tr(H(R^{jk})^*)$. So obviously $$ R^{jk}=E^{jj}-E^{kk}~. 
$$ By theorem we know that the root system encompasses exactly $\dim\sla(n,\C)-\dim{\cal H}=n^2-1-(n-1)=n(n-1)$ roots, which is exactly the number of matrices $E^{jk}$, $j\neq k$. Hence we've got all the roots and all root vectors. Moreover we have $$ \la R^{jk},R^{lm}\ra\in\{0,\pm1,\pm2\}\sbe\Z~. $$ Finally, for $j\neq k$ the reflection $S$ about the hyperplane $\{X:\tr(XR^{jk})=0\}$ acts on the set of all diagonal matrices by interchanging the $j$-th and the $k$-th diagonal entries: for $X=diag\{x_1,\ldots,x_n\}$ we have $S(X)=X-2\frac{\la X,R^{jk}\ra}{\la R^{jk},R^{jk}\ra}R^{jk}=X-(x_j-x_k)R^{jk}$. Since these transpositions generate the group $S(n)$ of permutations of $n$ elements, the Weyl group $W$ of $\sla(n,\C)$ is isomorphic to $S(n)$. In particular the reflection $S$ about the hyperplane $\{X:\tr(XR^{jk})=0\}$ permutes the roots as follows: $$ \forall l,m\notin\{j,k\}:\quad R^{jk}\mapsto R^{kj},\quad R^{jm}\mapsto R^{km},\quad R^{lk}\mapsto R^{lj},\quad R^{lm}\mapsto R^{lm}~. $$ Summing up, for $\sla(n,\C)$ we have:

- the Cartan subalgebra ${\cal H}$ is the set of all complex, traceless $n\times n$ diagonal matrices;

- we choose the Euclidean product $(X,Y)\mapsto\tr(XY^*)$ on ${\cal H}$;

- the set of roots consists of the matrices $R^{jk}\colon=E^{jj}-E^{kk}$, $j\neq k$;

- the reflection about the hyperplane orthogonal to $R^{jk}$ maps a diagonal matrix to the diagonal matrix obtained by interchanging its $j$-th and $k$-th diagonal entries;

- the set of root vectors comprises all matrices $E^{jk}$, $j\neq k$.
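The root data collected above can be checked numerically. The following is a minimal pure-Python sketch (no external libraries); the helper names `E`, `bracket`, `inner`, `reflect` and the sample diagonal entries `a` are our own choices, not part of the text. For $n=4$ it verifies $\ad(H)E^{jk}=(a_j-a_k)E^{jk}$ and $\tr(H(R^{jk})^*)=a_j-a_k$, counts the $n(n-1)$ roots, collects the inner products $\la R^{jk},R^{lm}\ra$, and checks that each reflection only swaps two diagonal entries, so that the orbit of a diagonal matrix with distinct entries has $4!=24$ elements, consistent with $W\cong S(4)$.

```python
# Sketch: numerical check of the sl(n, C) root data for n = 4 (pure Python).
# Indices are 0-based: E(n, 0, 1) stands for E^{12}.

def E(n, j, k):
    """Elementary matrix E^{jk}: a single 1 in row j, column k."""
    return [[1 if (r, c) == (j, k) else 0 for c in range(n)] for r in range(n)]

def lin(s, X, t, Y):
    """Linear combination s*X + t*Y of two square matrices."""
    return [[s * x + t * y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]

def matmul(X, Y):
    n = len(X)
    return [[sum(X[r][s] * Y[s][c] for s in range(n)) for c in range(n)] for r in range(n)]

def bracket(X, Y):
    """Commutator [X, Y] = XY - YX."""
    return lin(1, matmul(X, Y), -1, matmul(Y, X))

def inner(X, Y):
    """Euclidean product tr(X Y^*) = sum over all entries of X_rc * conj(Y_rc)."""
    return sum(x * complex(y).conjugate() for rx, ry in zip(X, Y) for x, y in zip(rx, ry))

def close(X, Y, tol=1e-12):
    return all(abs(x - y) < tol for rx, ry in zip(X, Y) for x, y in zip(rx, ry))

def root(n, j, k):
    """R^{jk} = E^{jj} - E^{kk}."""
    return lin(1, E(n, j, j), -1, E(n, k, k))

def reflect(X, R):
    """Reflection in the hyperplane orthogonal to R: X - 2<X,R>/<R,R> R."""
    return lin(1, X, -2 * inner(X, R) / inner(R, R), R)

def diagonal(entries):
    n = len(entries)
    return [[entries[r] if r == c else 0 for c in range(n)] for r in range(n)]

n = 4
a = [1, 2j, -3, 2 - 2j]          # arbitrary sample: distinct entries, sum 0
H = diagonal(a)
pairs = [(j, k) for j in range(n) for k in range(n) if j != k]

for j, k in pairs:
    # E^{jk} is a root vector: ad(H) E^{jk} = (a_j - a_k) E^{jk}
    assert close(bracket(H, E(n, j, k)), lin(a[j] - a[k], E(n, j, k), 0, E(n, j, k)))
    # R^{jk} represents the root: tr(H (R^{jk})^*) = a_j - a_k
    assert abs(inner(H, root(n, j, k)) - (a[j] - a[k])) < 1e-12
    # the reflection swaps the j-th and k-th diagonal entries
    swapped = a[:]
    swapped[j], swapped[k] = a[k], a[j]
    assert close(reflect(H, root(n, j, k)), diagonal(swapped))

# n(n-1) roots = dim sl(n, C) - dim H
assert len(pairs) == (n * n - 1) - (n - 1)

# mutual inner products of roots lie in {0, +-1, +-2}
values = {inner(root(n, *p), root(n, *q)) for p in pairs for q in pairs}
assert values == {-2, -1, 0, 1, 2}

# orbit of H under the group generated by the reflections
def key(X):
    return tuple(complex(round(X[r][r].real, 9), round(X[r][r].imag, 9)) for r in range(len(X)))

orbit, todo = {key(H)}, [H]
while todo:
    X = todo.pop()
    for j, k in pairs:
        Y = reflect(X, root(n, j, k))
        if key(Y) not in orbit:
            orbit.add(key(Y))
            todo.append(Y)
assert len(orbit) == 24          # = 4!, consistent with W = S(4)
```

Exact equality is avoided in favour of a small tolerance, since the entries are complex floating point numbers.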

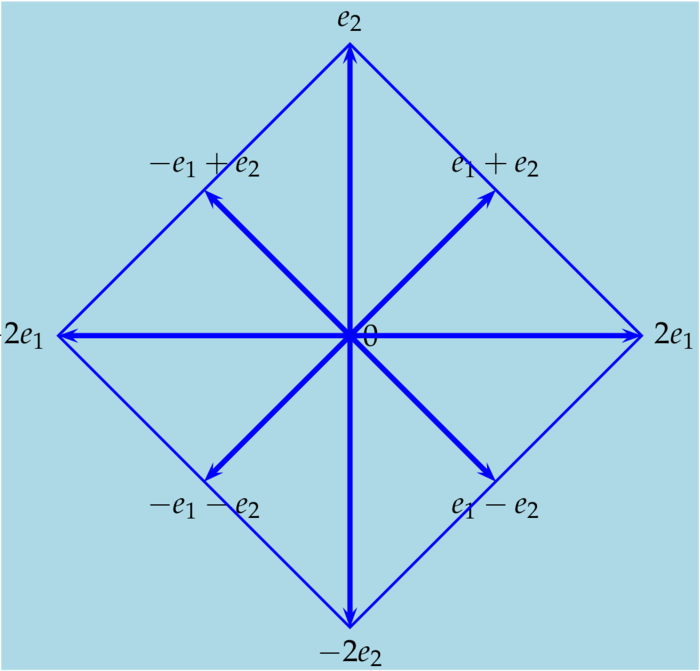

The Lie algebra $\so(2n,\C)$

Of course, the underlying real algebra is $\so(2n)\colon=\{A\in\Ma(2n,\R): A+A^t=0\}$, and a Cartan subalgebra in $\so(2n)$ is the set of block diagonal matrices $$ diag\{a_1J,\ldots,a_nJ\} \quad\mbox{where}\quad J\colon=\left(\begin{array}{cc}0&-1\\1&0\end{array}\right)\in\Ma(2,\R) $$ and $a_1,\ldots,a_n\in\R$. Any matrix $T\in\so(2n)$ is similar to a matrix in this Cartan subalgebra, i.e. there exists a matrix $U\in\SO(2n)$ such that $T=\Ad(U)D$ for some matrix $D$ in this Cartan subalgebra. By definition $\so(2n,\C)\colon=\so(2n)_\C=\{A\in\Ma(2n,\C): A+A^t=0\}$, and the complexification ${\cal H}$ of the Cartan subalgebra is the set of all block diagonal matrices $$ diag\{a_1J,\ldots,a_nJ\} \quad\mbox{where}\quad a_1,\ldots,a_n\in\C~. $$ For $H_1=diag\{a_1J,\ldots,a_nJ\}$ and $H_2=diag\{b_1J,\ldots,b_nJ\}$ in ${\cal H}$ it follows from $J^2=-I$ and $J^*=-J$ that $H_1H_2^*=diag\{a_1\bar b_1I,\ldots,a_n\bar b_nI\}$, where $I$ is the identity in $\Ma(2,\C)$. Defining the Euclidean product on $\so(2n,\C)$ by $\la X,Y\ra\colon=\frac12\tr(XY^*)$, we thus get $\la H_1,H_2\ra=\sum_j a_j\bar b_j$; moreover $\ad(H)^*=\ad(H^*)$, and $H^*\in{\cal H}$ commutes with $H$, so all mappings $\ad(H)$, $H\in{\cal H}$, are normal and hence diagonalizable. Clearly ${\cal H}$ is commutative, so for ${\cal H}$ to be a Cartan subalgebra only maximality remains to be proved. So suppose $X\in\so(2n,\C)$ satisfies $[X,H]=0$ for all $H\in{\cal H}$. Write $X$ as an $n\times n$ block matrix with $2\times2$ blocks $X_{lm}$ and denote by $H^j\in{\cal H}$ the block diagonal matrix with $a_j=1$ and $a_l=0$ for $l\neq j$; then $$ (XH^j)_{lm}=\sum_r X_{lr}H^j_{rm}=X_{lj}H^j_{jm} \quad\mbox{and}\quad (H^jX)_{lm}=\sum_r H^j_{lr}X_{rm}=H^j_{lj}X_{jm}~, $$ which implies: $$ [X,H^j]_{lm} =X_{lj}H^j_{jm}-H^j_{lj}X_{jm}~. $$ For $l,m\neq j$ this equals $0$. For $l=j\neq m$ we get $-JX_{jm}$, and for $m=j\neq l$ we get $X_{lj}J$. As $H^j_{jj}=J$ is invertible, we conclude that all off-diagonal blocks of $X$ vanish, i.e. $X$ is block diagonal with $2\times2$ blocks. Each diagonal block of such a matrix in $\so(2n,\C)$ is a skew-symmetric $2\times2$ matrix and thus a multiple of $J$; hence $X\in{\cal H}$, and ${\cal H}$ is a Cartan subalgebra. 
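The identities used for ${\cal H}$ can be spot-checked numerically. This pure-Python sketch (helper names and sample coefficients are hypothetical) builds $H_1=diag\{a_lJ\}$ and $H_2=diag\{b_lJ\}$ for $n=3$ and checks $H_1H_2^*=diag\{a_l\bar b_lI\}$, $\frac12\tr(H_1H_2^*)=\sum_l a_l\bar b_l$, and $H_1H_1^*=H_1^*H_1$, the commutation that makes $\ad(H_1)$ normal.

```python
# Sketch: check H1 H2^* = diag{a_l conj(b_l) I} and <H1,H2> = sum_l a_l conj(b_l) in so(2n, C).

J = [[0, -1], [1, 0]]

def cartan(coeffs):
    """Block diagonal matrix diag{c_1 J, ..., c_n J} of size 2n x 2n."""
    m = 2 * len(coeffs)
    H = [[0j] * m for _ in range(m)]
    for l, c in enumerate(coeffs):
        for r in range(2):
            for s in range(2):
                H[2 * l + r][2 * l + s] = c * J[r][s]
    return H

def matmul(X, Y):
    m = len(X)
    return [[sum(X[r][s] * Y[s][c] for s in range(m)) for c in range(m)] for r in range(m)]

def star(X):
    """Conjugate transpose X^* = conj(X)^t."""
    m = len(X)
    return [[complex(X[c][r]).conjugate() for c in range(m)] for r in range(m)]

def close(X, Y, tol=1e-12):
    return all(abs(x - y) < tol for rx, ry in zip(X, Y) for x, y in zip(rx, ry))

a = [1 + 1j, -2, 0.5j]          # arbitrary sample coefficients
b = [3, 1 - 2j, -1j]
H1, H2 = cartan(a), cartan(b)
P = matmul(H1, star(H2))

# expected: block l equals a_l * conj(b_l) times the 2 x 2 identity
expected = [[0j] * 6 for _ in range(6)]
for l in range(3):
    expected[2 * l][2 * l] = expected[2 * l + 1][2 * l + 1] = a[l] * complex(b[l]).conjugate()
assert close(P, expected)

# hence <H1, H2> = tr(H1 H2^*) / 2 = sum_l a_l conj(b_l)
ip = sum(P[r][r] for r in range(6)) / 2
assert abs(ip - sum(x * complex(y).conjugate() for x, y in zip(a, b))) < 1e-12

# H1 commutes with H1^* (which again lies in H), so ad(H1) is normal
assert close(matmul(H1, star(H1)), matmul(star(H1), H1))
```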
Next we want to find root vectors $X\in\so(2n,\C)$; again we assume $j < k$ and write $X$ as an $n\times n$ block matrix consisting of $2\times2$ blocks, but this time we assume that the only non-zero blocks are the $(j,k)$ block, which we denote by $C\in\Ma(2,\C)$, and the $(k,j)$ block, which must equal $-C^t$. For any $H=diag\{a_1J,\ldots,a_nJ\}\in{\cal H}$ we have $[H,X]_{lm}=0$ unless $(l,m)=(j,k)$ or $(l,m)=(k,j)$, and $$ [H,X]_{jk} =H_{jj}C-CH_{kk}=a_jJC-a_kCJ~; $$ since $[H,X]\in\so(2n,\C)$, its $(k,j)$ block is $-([H,X]_{jk})^t$. Now put $$ C=\left(\begin{array}{cc}a&b\\c&d\end{array}\right)~. $$ Then the equation $[H,X]=\l X$ comes down to the matrix equation $$ \left(\begin{array}{cc}-a_kb-a_jc&-a_jd+a_ka\\-a_kd+a_ja&a_kc+a_jb\end{array}\right) =\l\left(\begin{array}{cc}a&b\\c&d\end{array}\right), $$ which in turn is an eigenvalue equation: $$ \left(\begin{array}{cccc} 0&-a_k&-a_j&0\\ a_k&0&0&-a_j\\ a_j&0&0&-a_k\\ 0&a_j&a_k&0 \end{array}\right) \left(\begin{array}{c} a\\ b\\ c\\ d \end{array}\right) =\l \left(\begin{array}{c} a\\ b\\ c\\ d \end{array}\right)~. $$ This gives us four matrices $C$: \begin{equation}\label{rooeq10}\tag{ROO10} \left(\begin{array}{cc}1&-i\\i&1\end{array}\right),\quad \left(\begin{array}{cc}1&i\\-i&1\end{array}\right),\quad \left(\begin{array}{cc}1&-i\\-i&-1\end{array}\right),\quad \left(\begin{array}{cc}1&i\\i&-1\end{array}\right) \end{equation} with eigenvalues $\l$: $$ -i(a_j-a_k),\quad i(a_j-a_k),\quad i(a_j+a_k),\quad -i(a_j+a_k)~, $$ respectively. Finally, for each pair $j < k$ we get four roots $R$ by solving $\l=\frac12\tr(HR^*)$. Put $R=diag\{b_1J,\ldots,b_nJ\}$; then $R^*=diag\{-\bar b_1J,\ldots,-\bar b_nJ\}$ and $\frac12\tr(HR^*)=\sum_la_l\bar b_l$. It follows that $b_l=0$ for all $l\notin\{j,k\}$ and, respectively, $$ b_j=i,b_k=-i\quad\mbox{or}\quad b_j=-i,b_k=i\quad\mbox{or}\quad b_j=-i,b_k=-i\quad\mbox{or}\quad b_j=i,b_k=i~. $$ These roots will be denoted by $R^{jkp}$ for $j < k$ and $p=1,2,3,4$. 
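A numerical check of \eqref{rooeq10} and of the resulting roots: the sketch below (pure Python; `root_vector`, `cases` and the sample values are illustrative assumptions) builds $X$ with $(j,k)$ block $C$ and $(k,j)$ block $-C^t$ in $\so(6,\C)$ and verifies $[H,X]=\l X$, where $\l=a_j\bar b_j+a_k\bar b_k$ is computed from the claimed root coefficients $b_j,b_k$. This tests the eigenvalues and the roots at the same time.

```python
# Sketch: check (ROO10) and the roots R^{jkp} in so(2n, C) for n = 3 (0-based block indices).

J = [[0, -1], [1, 0]]

def zeros(m):
    return [[0j] * m for _ in range(m)]

def matmul(X, Y):
    m = len(X)
    return [[sum(X[r][s] * Y[s][c] for s in range(m)) for c in range(m)] for r in range(m)]

def bracket(X, Y):
    XY, YX = matmul(X, Y), matmul(Y, X)
    return [[p - q for p, q in zip(rp, rq)] for rp, rq in zip(XY, YX)]

def close(X, Y, tol=1e-12):
    return all(abs(x - y) < tol for rx, ry in zip(X, Y) for x, y in zip(rx, ry))

def cartan(a):
    """H = diag{a_1 J, ..., a_n J}."""
    H = zeros(2 * len(a))
    for l, c in enumerate(a):
        for r in range(2):
            for s in range(2):
                H[2 * l + r][2 * l + s] = c * J[r][s]
    return H

def root_vector(n, j, k, C):
    """X with (j,k) block C, (k,j) block -C^t and all other blocks zero."""
    X = zeros(2 * n)
    for r in range(2):
        for s in range(2):
            X[2 * j + r][2 * k + s] = C[r][s]
            X[2 * k + r][2 * j + s] = -C[s][r]
    return X

# the four matrices C of (ROO10), each with (b_j, b_k) of the claimed root R^{jkp}
cases = [
    ([[1, -1j], [1j, 1]], (1j, -1j)),
    ([[1, 1j], [-1j, 1]], (-1j, 1j)),
    ([[1, -1j], [-1j, -1]], (-1j, -1j)),
    ([[1, 1j], [1j, -1]], (1j, 1j)),
]

n, j, k = 3, 0, 2
a = [0.7, -1.3 + 0.4j, 2.1j]        # arbitrary sample H
H = cartan(a)
for C, (bj, bk) in cases:
    X = root_vector(n, j, k, C)
    assert all(X[r][s] == -X[s][r] for r in range(2 * n) for s in range(2 * n))  # X in so(2n, C)
    lam = a[j] * bj.conjugate() + a[k] * bk.conjugate()   # lambda = (1/2) tr(H R^*)
    assert close(bracket(H, X), [[lam * x for x in row] for row in X])
```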
Thus typical roots are (we put $j=1$ and $k=2$): $$ diag\{iJ,-iJ,0,\ldots,0\},\quad diag\{-iJ,iJ,0,\ldots,0\},\quad diag\{-iJ,-iJ,0,\ldots,0\},\quad diag\{iJ,iJ,0,\ldots,0\}~. $$ As $\dim\so(2n,\C)=2n(2n-1)/2=n(2n-1)$ and $\dim{\cal H}=n$, there must be $n(2n-1)-n=2n(n-1)$ roots. On the other hand we've found $4n(n-1)/2=2n(n-1)$ roots $R^{jkp}$, $j < k\in\{1,\ldots,n\}$ and $p\in\{1,2,3,4\}$, i.e. we've got all roots! For $\{l,m\}\cap\{j,k\}=\emptyset$ we have $\la R^{jkp},R^{lmq}\ra=0$; for $l=j$ and $m=k$ the four roots $R^{jkp}$, $p=1,2,3,4$, form a square, each of them having norm $\sqrt2$; if $\{l,m\}\cap\{j,k\}$ contains exactly one point, then $$ \la R^{jkp},R^{lmq}\ra=\pm1~. $$
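The counting and the inner products can also be checked mechanically. In the sketch below a root $R^{jkp}=diag\{b_1J,\ldots,b_nJ\}$ is represented only by its coefficient vector $(b_1,\ldots,b_n)$, and $\la R,S\ra=\frac12\tr(RS^*)=\sum_l b_l\bar c_l$ as computed above; the function names are our own. For $n=4$ it confirms that there are $2n(n-1)=24$ roots, that the inner products follow the pattern just described, and that the four roots for a fixed pair form a square.

```python
from itertools import combinations

def roots(n):
    """All R^{jkp}, each given as (pair (j, k), coefficient vector (b_1, ..., b_n))."""
    result = []
    for j, k in combinations(range(n), 2):
        for bj, bk in [(1j, -1j), (-1j, 1j), (-1j, -1j), (1j, 1j)]:
            b = [0j] * n
            b[j], b[k] = bj, bk
            result.append(((j, k), b))
    return result

def inner(b, c):
    """<R, S> = (1/2) tr(R S^*) = sum_l b_l conj(c_l)."""
    return sum(x * y.conjugate() for x, y in zip(b, c))

n = 4
R = roots(n)
assert len(R) == n * (2 * n - 1) - n == 2 * n * (n - 1)    # = 24

for p, b in R:
    for q, c in R:
        v = inner(b, c)
        shared = len(set(p) & set(q))
        assert abs(v.imag) < 1e-12
        if shared == 0:
            assert v == 0
        elif shared == 1:
            assert v.real in (1, -1)
        else:
            assert v.real in (2, 0, -2)

# the four roots R^{12p} (pair (0, 1) here) form a square: norms 2, opposite vertices -2
square = [b for p, b in R if p == (0, 1)]
gram = [[round(inner(b, c).real) for c in square] for b in square]
assert gram == [[2, -2, 0, 0], [-2, 2, 0, 0], [0, 0, 2, -2], [0, 0, -2, 2]]
```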

The Lie algebra $\so(2n+1,\C)$

We get a Cartan subalgebra of $\so(2n+1)$ by taking all elements of the Cartan subalgebra of $\so(2n)$ augmented by one row and one column of zeros. As in the previous subsection it can be proved that this is indeed a Cartan subalgebra of $\so(2n+1)$; clearly it is isomorphic to the Cartan subalgebra of $\so(2n)$, and the roots $R^{jkp}$ and root vectors of $\so(2n,\C)$, augmented by zeros, remain roots and root vectors. However, there are additional roots and additional root vectors. Let's assume these additional root vectors have the form $$ \left(\begin{array}{cc} 0&-x^t\\ x&0 \end{array}\right) $$ for some row vector $x=(x_1,\ldots,x_{2n})\in\C^{2n}$. Then for all $H\in{\cal H}\sbe\so(2n,\C)$ we need $$ \left(\begin{array}{cc} H&0\\ 0&0 \end{array}\right) \left(\begin{array}{cc} 0&-x^t\\ x&0 \end{array}\right) - \left(\begin{array}{cc} 0&-x^t\\ x&0 \end{array}\right) \left(\begin{array}{cc} H&0\\ 0&0 \end{array}\right) =\l \left(\begin{array}{cc} 0&-x^t\\ x&0 \end{array}\right)~, $$ i.e. $$ \left(\begin{array}{cc} 0&-Hx^t\\ 0&0 \end{array}\right) - \left(\begin{array}{cc} 0&0\\ xH&0 \end{array}\right) = \left(\begin{array}{cc} 0&-Hx^t\\ -xH&0 \end{array}\right) = \l \left(\begin{array}{cc} 0&-x^t\\ x&0 \end{array}\right)~. $$ As $H^t=-H$, the lower left block of this equation is the transpose of the upper right one, so we are left with solving $Hx^t=\l x^t$. Now the eigenvalues of $J$ are $i$ and $-i$ with eigenvectors $(1,-i)^t$ and $(1,i)^t$, respectively, and thus the eigenvalues of $H=diag\{a_1J,\ldots,a_nJ\}$ are $ia_1,\ldots,ia_n$ and $-ia_1,\ldots,-ia_n$ with eigenvectors $$ (1,-i,0,\ldots,0,0)^t,\ldots,(0,0,\ldots,0,1,-i)^t \quad\mbox{and}\quad (1,i,0,\ldots,0,0)^t,\ldots,(0,0,\ldots,0,1,i)^t~, $$ respectively; note that these eigenvectors do not depend on $H$. Finally, $ia_j=\frac12\tr(HR^{j*})$ with $R^j=diag\{b_1J,\ldots,b_nJ\}$ implies $b_l=0$ for all $l\neq j$ and $b_j=-i$; similarly the eigenvalue $-ia_j$ leads to $b_j=i$. Hence the corresponding roots are $\pm iH^j$. Together with the roots of $\so(2n,\C)$ we've found $2n(n-1)+2n=2n^2=(2n+1)2n/2-n=\dim\so(2n+1,\C)-\dim{\cal H}$ roots, which means we've got all of them!
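The additional roots of $\so(2n+1,\C)$ admit the same kind of check. The sketch below (pure Python, hypothetical helper names, sample values for $n=3$, 0-based indices) embeds $H$ into $\Ma(2n+1,\C)$ and takes $x$ with entries $1,-i$ (resp. $1,i$) in the two positions of the $j$-th block. It confirms $[H,X]=ia_jX$ (resp. $-ia_jX$), the root coefficient $b_j=-i$ (resp. $i$), and the count $2n(n-1)+2n=\dim\so(2n+1,\C)-\dim{\cal H}$.

```python
# Sketch: the additional roots of so(2n+1, C) for n = 3 (0-based indices).

J = [[0, -1], [1, 0]]

def zeros(m):
    return [[0j] * m for _ in range(m)]

def matmul(X, Y):
    m = len(X)
    return [[sum(X[r][s] * Y[s][c] for s in range(m)) for c in range(m)] for r in range(m)]

def bracket(X, Y):
    XY, YX = matmul(X, Y), matmul(Y, X)
    return [[p - q for p, q in zip(rp, rq)] for rp, rq in zip(XY, YX)]

def close(X, Y, tol=1e-12):
    return all(abs(x - y) < tol for rx, ry in zip(X, Y) for x, y in zip(rx, ry))

def cartan_odd(a):
    """diag{a_1 J, ..., a_n J} augmented by a zero row and a zero column."""
    H = zeros(2 * len(a) + 1)
    for l, c in enumerate(a):
        for r in range(2):
            for s in range(2):
                H[2 * l + r][2 * l + s] = c * J[r][s]
    return H

def extra_root_vector(x):
    """The matrix [[0, -x^t], [x, 0]] for a row vector x in C^{2n}."""
    m = len(x) + 1
    X = zeros(m)
    for r, xr in enumerate(x):
        X[r][m - 1] = -xr      # last column: -x^t
        X[m - 1][r] = xr       # last row: x
    return X

n = 3
a = [1.5, -0.25 + 2j, -1j]          # arbitrary sample H
H = cartan_odd(a)
found = 0
for j in range(n):
    for sign in (1, -1):
        x = [0j] * (2 * n)
        x[2 * j], x[2 * j + 1] = 1, -sign * 1j     # (1, -i) if sign = 1, (1, i) if sign = -1
        X = extra_root_vector(x)
        lam = sign * 1j * a[j]                     # eigenvalue +i a_j resp. -i a_j
        assert close(bracket(H, X), [[lam * v for v in row] for row in X])
        bj = -sign * 1j                            # root coefficient: lam = a_j * conj(b_j)
        assert abs(a[j] * bj.conjugate() - lam) < 1e-12
        found += 1

# together with the 2n(n-1) roots of so(2n, C): 2n^2 = dim so(2n+1, C) - dim H
assert 2 * n * (n - 1) + found == (2 * n + 1) * (2 * n) // 2 - n
```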

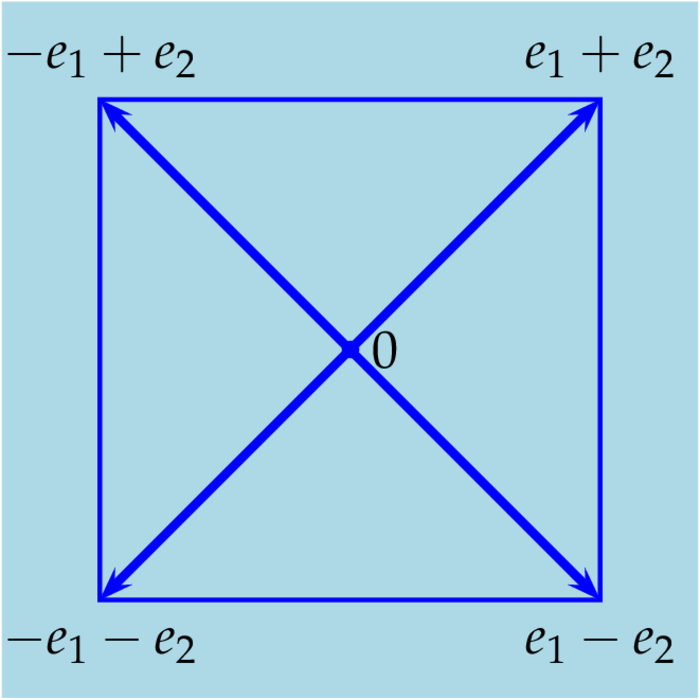

The Lie algebra $\spa(n,\C)$

First, we define the Lie algebra $\spa(n,\C)=\{X\in\Ma(2n,\C): X^t=JXJ\}$, where $J\in\Ma(2n,\C)$ now denotes the block matrix $$ J\colon=\left(\begin{array}{cc}0&-1\\1&0\end{array}\right) $$ with $1$ the identity in $\Ma(n,\C)$. The intersection $\spa(n)\colon=\spa(n,\C)\cap\su(2n)$ is called the compact symplectic algebra, and its complexification coincides with $\spa(n,\C)$. Writing $X\in\Ma(2n,\C)$ as a block matrix $$ X=\left(\begin{array}{cc}A&B\\C&D\end{array}\right), \quad\mbox{we have $X\in\spa(n,\C)$ iff}\quad B^t=B,C^t=C,D=-A^t~, $$ and $X\in\spa(n)$ iff $$ \bar A^t=-A,B^t=B,C=-\bar B^t,D=-A^t~; $$ the conditions $\bar D^t=-D$ and $C^t=C$ follow from these. Thus an element of $\spa(n,\C)$ is given by an arbitrary $A\in\Ma(n,\C)$ and two symmetric matrices $B,C\in\Ma(n,\C)$, i.e. the (complex) dimension of $\spa(n,\C)$ is $n^2+n(n+1)$; likewise an element of $\spa(n)$ is given by a skew-Hermitian $A$ and a symmetric $B$, with $C=-\bar B^t$ and $D=-A^t$, so the real dimension of $\spa(n)$ is $n^2+n(n+1)$ as well. The Cartan subalgebra ${\cal H}$ will be the complexification of the subspace $$ \{diag\{ia_1,\ldots,ia_n,-ia_1,\ldots,-ia_n\}:a_1,\ldots,a_n\in\R\}~, $$ which is of course commutative and of dimension $n$; thus ${\cal H}$ consists of the matrices $diag\{D,-D\}$ with $D\in\Ma(n,\C)$ diagonal. Taking the Euclidean product $\tr(XY^*)/2$, we have $\ad(H)^*=\ad(H^*)$ for all $H\in{\cal H}$, and since $H^*\in{\cal H}$ commutes with $H$, all mappings $\ad(H)$ are normal and thus diagonalizable (for $H$ in the real subspace above even $\ad(H)^*=-\ad(H)$). Finally, ${\cal H}$ is maximal: for a diagonal matrix $D$ and $A,B,C\in\Ma(n,\C)$ with $B,C$ symmetric we get \begin{equation}\label{rooeq11}\tag{ROO11} H\colon=\left(\begin{array}{cc}D&0\\0&-D\end{array}\right),\quad X\colon=\left(\begin{array}{cc}A&B\\C&-A^t\end{array}\right),\quad [H,X]=\left(\begin{array}{cc}[D,A]&BD+DB\\-CD-DC&-[D,A]^t\end{array}\right) \end{equation} Hence $[H,X]=0$ for all $H\in{\cal H}$ iff $A$ is diagonal and $BD+DB=CD+DC=0$ for all diagonal matrices $D\in\Ma(n,\C)$, which holds if and only if $B=C=0$ (take $D=1$). Hence $X\in{\cal H}$, and therefore ${\cal H}$ is a Cartan subalgebra. Next we need to find $n^2+n(n+1)-n=2n^2$ root vectors $X$ and roots $R$.
First, according to subsection we try for $j\neq k$: $$ X\colon=\left(\begin{array}{cc}E^{jk}&0\\0&-E^{kj}\end{array}\right)~. $$ By formula \eqref{rooeq11} for the commutator (with $A=E^{jk}$ and $B=C=0$) we get: $$ \ad(H)X =\left(\begin{array}{cc}[D,E^{jk}]&0\\0&-[D,E^{jk}]^t\end{array}\right) $$ and by exam this equals $$ (D_{jj}-D_{kk})\left(\begin{array}{cc}E^{jk}&0\\0&-E^{kj}\end{array}\right) =(D_{jj}-D_{kk})X~. $$ The associated root $R\in{\cal H}$ is determined by $\frac12\tr(HR^*)=D_{jj}-D_{kk}$, i.e. $$ R=\left(\begin{array}{cc}E^{jj}-E^{kk}&0\\0&-E^{jj}+E^{kk}\end{array}\right)~. $$ Second, as the off-diagonal blocks of any element of $\spa(n,\C)$ must be symmetric matrices, we try the simplest choice for $j\le k$: $$ X\colon=\left(\begin{array}{cc}0&E^{jk}+E^{kj}\\0&0\end{array}\right) \quad\mbox{and}\quad Y\colon=\left(\begin{array}{cc}0&0\\E^{jk}+E^{kj}&0\end{array}\right)~. $$ Putting $E\colon=E^{jk}+E^{kj}$ we get by \eqref{rooeq11}: $$ [H,X]=\left(\begin{array}{cc}0&ED+DE\\0&0\end{array}\right) \quad\mbox{and}\quad [H,Y]=\left(\begin{array}{cc}0&0\\-ED-DE&0\end{array}\right)~. $$ Finally $ED+DE=(D_{jj}+D_{kk})E$, and therefore $$ [H,X]=(D_{jj}+D_{kk})X \quad\mbox{and}\quad [H,Y]=-(D_{jj}+D_{kk})Y~, $$ i.e. $X$ and $Y$ are root vectors with roots $$ \left(\begin{array}{cc}E^{jj}+E^{kk}&0\\0&-E^{jj}-E^{kk}\end{array}\right) \quad\mbox{and}\quad \left(\begin{array}{cc}-E^{jj}-E^{kk}&0\\0&E^{jj}+E^{kk}\end{array}\right)~. $$ Altogether we've found $(n^2-n)+2(n(n+1)/2)=2n^2$ roots of $\spa(n,\C)$, which means we've found all roots.
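Finally, the root vectors of $\spa(n,\C)$ can be verified numerically. This pure-Python sketch (helper names such as `block` and `in_sp` and the sample diagonal `d` are our own) uses $n=3$, checks that every candidate lies in $\spa(n,\C)$ via $X^t=JXJ$, checks the eigenvalues $D_{jj}-D_{kk}$ and $\pm(D_{jj}+D_{kk})$ of $\ad(H)$ for $H=diag\{D,-D\}$, and counts the $2n^2$ root vectors, where the second family includes the choices $j=k$.

```python
# Sketch: root vectors of sp(n, C) for n = 3 (0-based indices).

def zeros(n):
    return [[0j] * n for _ in range(n)]

def identity(n):
    return [[1 if r == c else 0 for c in range(n)] for r in range(n)]

def E(n, j, k):
    return [[1 if (r, c) == (j, k) else 0 for c in range(n)] for r in range(n)]

def neg(X):
    return [[-x for x in row] for row in X]

def scale(s, X):
    return [[s * x for x in row] for row in X]

def block(A, B, C, D):
    """Assemble the 2n x 2n matrix [[A, B], [C, D]] from n x n blocks."""
    return [ra + rb for ra, rb in zip(A, B)] + [rc + rd for rc, rd in zip(C, D)]

def matmul(X, Y):
    m = len(X)
    return [[sum(X[r][s] * Y[s][c] for s in range(m)) for c in range(m)] for r in range(m)]

def bracket(X, Y):
    XY, YX = matmul(X, Y), matmul(Y, X)
    return [[p - q for p, q in zip(rp, rq)] for rp, rq in zip(XY, YX)]

def close(X, Y, tol=1e-12):
    return all(abs(x - y) < tol for rx, ry in zip(X, Y) for x, y in zip(rx, ry))

def in_sp(X):
    """Membership test X^t = J X J with J = [[0, -1], [1, 0]] in n x n blocks."""
    n = len(X) // 2
    Jm = block(zeros(n), neg(identity(n)), identity(n), zeros(n))
    Xt = [list(col) for col in zip(*X)]
    return close(Xt, matmul(matmul(Jm, X), Jm))

n = 3
d = [0.5, -2 + 1j, 3j]                          # arbitrary sample diagonal of D
D = [[d[r] if r == c else 0 for c in range(n)] for r in range(n)]
Z = zeros(n)
H = block(D, Z, Z, neg(D))                      # H = diag{D, -D}
assert in_sp(H)
found = 0

# first family: [[E^{jk}, 0], [0, -E^{kj}]] for j != k, eigenvalue d_j - d_k
for j in range(n):
    for k in range(n):
        if j != k:
            X = block(E(n, j, k), Z, Z, neg(E(n, k, j)))
            assert in_sp(X)
            assert close(bracket(H, X), scale(d[j] - d[k], X))
            found += 1

# second family, j <= k: E^{jk} + E^{kj} in the upper right resp. lower left block
for j in range(n):
    for k in range(j, n):
        Es = [[p + q for p, q in zip(rp, rq)] for rp, rq in zip(E(n, j, k), E(n, k, j))]
        X, Y = block(Z, Es, Z, Z), block(Z, Z, Es, Z)
        assert in_sp(X) and in_sp(Y)
        assert close(bracket(H, X), scale(d[j] + d[k], X))
        assert close(bracket(H, Y), scale(-(d[j] + d[k]), Y))
        found += 2

assert found == 2 * n * n      # (n^2 - n) + 2 * n(n+1)/2
```

Note that these checks use the commutator signs of \eqref{rooeq11} only implicitly, through the direct products computed by `bracket`.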